The Linux SG driver version 4.0

2 Changes to sg driver between version 3.5.36 and 4.0

2.1 Deprecated or removed features in version 4.0 compared to earlier version

3 SCSI Generic versions 1, 2, 3 and 4 interfaces

3.1 Comparison of fields in the v4 and v3 interfaces

3.2 Flags in v3 and v4 interfaces

4 Architecture of the sg driver

4.2 Direct, mmap()-ed IO and bio_s

9 Sharing design considerations

10.2 Aborting multiple requests

10.3 Single/multiple (non-)blocking requests

13 Bi-directional command support

The SCSI Generic (sg) driver in Linux passes SCSI commands, optionally with data (known as data-out), to a SCSI device, and receives back from that device: SCSI status, sense data (if the status is "bad") and optionally data (known as data-in). It is the pass-through driver of the Linux SCSI subsystem. The sg driver does not own SCSI devices in the normal fashion in which many OS drivers have exclusive control over a class of devices. In most cases the sg driver shares control of SCSI devices with other SCSI sub-system upper level drivers such as sd (for disks), st (for tape drives), sr (for DVD/CDs) and ses (for SCSI Enclosure Services devices). Some disk array drivers choose to make their (physical) disks visible to the sg driver and not visible to the sd driver; the reason is to disallow direct data access to those disks while allowing tools like smartmontools to monitor the metadata of those disks (e.g. their temperature and endurance/lifetime metrics (for SSDs)) via the sg driver nodes.

The sg driver has been present since version 1.0 of the Linux kernel in 1992. In the 29 years since then the driver has had three interfaces to the user space and now a fourth is being added. The first and second interfaces (v1 and v2) use the same header, 'struct sg_header', with only v2 now fully supported. The "v3" interface is based on 'struct sg_io_hdr'. Both these structures are defined in include/scsi/sg.h, the bulk of whose contents will move to include/uapi/scsi/sg.h as part of this upgrade. Prior to the changes now proposed, the "v4" interface was only implemented in the block layer's bsg driver. The Block SCSI Generic (bsg) driver has been present in Linux for around 15 years. The bsg driver's user interface is found in include/uapi/linux/bsg.h. These changes propose adding support for the "v4" interface via ioctl(SG_IO) for synchronous use, and adding the new ioctl(SG_IOSUBMIT) and ioctl(SG_IORECEIVE) for asynchronous/non-blocking use. The plan is to deprecate and finally remove (or severely restrict) the write(2)/read(2) based asynchronous interface used currently by the v1, v2 and v3 interfaces. The v3 asynchronous interface is supported by the new SG_IOSUBMIT_V3 and SG_IORECEIVE_V3 ioctl(2)s.

If the driver changes are accepted, the driver version, which is visible via an ioctl(SG_GET_VERSION_NUM), will be bumped from 3.5.36 (in lk 5.13) to 4.0.x. The opportunity is being taken to clean up the driver after 20 years of piecemeal patches. Those patches have left the driver with misleading variable names and comments that don't match the adjacent code. Plus there are new kernel facilities that the driver can take advantage of. Also of note is that much of the low level code once in the sg driver (and remnants remain) has been moved to the block layer and the SCSI mid-level. This upgrade has been done as a two stage process. The first stage cleans the driver up, removes some restrictions and reinstates some features that have been accidentally lost. It also adds basic v4 interface support using ioctl(SG_IO) for sync/blocking usage, and ioctl(SG_IOSUBMIT) and ioctl(SG_IORECEIVE) for async/non-blocking usage.

Note that the Linux block layer implements the synchronous sg v3 interface via ioctl(SG_IO) on all block devices that use the SCSI subsystem, directly or indirectly. Indirect uses include command set translation (e.g. SATA disks use libata which implements the T10 SAT standard; see https://www.t10.org/ ) and the USB subsystem's mass storage class (and more recently the UAS protocol). In pseudocode, an example like 'ioctl(open("/dev/sdc"), SG_IO, ptr_to_sg_io_hdr)' works as expected. That path is not implemented by the sg driver, so it is important that the sg driver's implementation of ioctl(SG_IO) remains consistent with other driver implementations (mainly the one found in the block/scsi_ioctl.c kernel source file).

A recent first stage patchset (containing 45 patches) was sent to the linux-scsi list on 20210523. Its cover was titled: "[PATCH v19 00/45] sg: add v4 interface". Patch 45 bumps the driver version number to 4.0.12. The sgv4_20210804 patchset below combines the first and second stage patchsets. Its first stage patchset contains 46 patches. Its second stage patchset adds the new features such as file and request sharing, multiple requests (in one invocation) and support for the so-called extended ioctl(2). The second patchset is currently only available from this page (as patches 0047 to 0085 applied on top of the first stage). The second stage bumps the driver version number to 4.0.47. See the Downloads and testing section below.

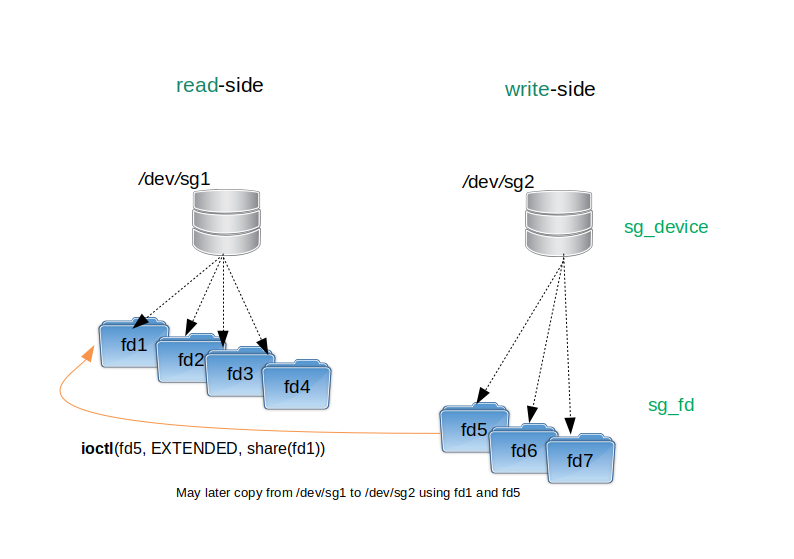

In keeping with a new Linux kernel coding style directive, the terms master and slave have been replaced by read-side and write-side respectively. These terms refer to the two sides of a request share, which is explained in a later section. One aspect lost with this new terminology is that the read-side is the leader (i.e. comes first) and the write-side is a follower. Usually the write-side is dependent on the read-side succeeding.

The sg driver version number is visible to the user space via ioctl(SG_GET_VERSION_NUM) and in procfs via 'cat /proc/scsi/sg/version'. The version number has been stable at 3.5.36 for the past 5 years, up to and including Linux kernel 5.13.0 . Since the changes proposed in this page are large, they have been divided into two patchsets. The first patchset has been sent to the Linux-scsi development list in many versions during the last months.
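As a small illustration of reading that version number from user space, the following C sketch calls ioctl(SG_GET_VERSION_NUM) and splits the result into its three components. It assumes the driver packs the version as a decimal integer with two digits per component (so 3.5.36 is reported as 30536); that encoding is not stated on this page and should be checked against the driver source. The names sg_version, sg_decode_version() and sg_query_version() are hypothetical helpers invented for this example.

```c
#include <assert.h>
#include <fcntl.h>
#include <sys/ioctl.h>
#include <unistd.h>
#include <scsi/sg.h>

/* Hypothetical helper type: the three components of the driver version. */
struct sg_version {
    int major;
    int minor;
    int revision;
};

/* Assumption: the driver reports major*10000 + minor*100 + revision. */
struct sg_version sg_decode_version(int packed)
{
    struct sg_version v;

    assert(packed >= 0);
    v.major = packed / 10000;
    v.minor = (packed / 100) % 100;
    v.revision = packed % 100;
    return v;
}

/* Returns 0 and fills *out on success, -1 if the open or ioctl fails. */
int sg_query_version(const char *dev_path, struct sg_version *out)
{
    int packed = 0;
    int res;
    int fd;

    assert(dev_path != NULL && out != NULL);
    fd = open(dev_path, O_RDONLY | O_NONBLOCK);
    if (fd < 0)
        return -1;
    res = ioctl(fd, SG_GET_VERSION_NUM, &packed);
    close(fd);
    if (res < 0)
        return -1;
    *out = sg_decode_version(packed);
    return 0;
}
```

A caller might pass "/dev/sg0" (or any other sg node it has permission to open) and print the three fields joined by dots, which should match the string shown by 'cat /proc/scsi/sg/version'.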

The following set of bullet points corresponds to the first patchset:

remove the limit of 16 outstanding commands/requests per file descriptor. There is now a single xarray ("extensible array") per sg file descriptor, with some requests marked as "inactive" which means they are ready for re-use in the future. In the updated driver there can be a virtually unlimited number of outstanding requests per file descriptor. To stop a badly programmed application from consuming all available memory, each request that uses data buffers bumps a byte counter, and further requests on that descriptor are rejected if a given count is exceeded. That limit defaults to 16 MiB and can be modified via an ioctl(2).

defer freeing of resources (specifically memory) of all request objects on one file descriptor until the last copy (if any) of that file descriptor is closed. This includes waiting for the completion of all associated "inflight" requests. This allows re-use of resources when multiple SCSI commands are sent via the same file descriptor. Associated request block layer and SCSI mid-level objects are freed as soon as practical (same as they were in 3.5.36).

extend the SG_IO ioctl(2) to accept the v4 interface based on struct sg_io_v4 found in <linux/bsg.h>

add SG_IOSUBMIT and SG_IORECEIVE ioctl(2)s for asynchronous (non-blocking) usage with the v4 interface.

add SG_IOSUBMIT_V3 and SG_IORECEIVE_V3 ioctl(2)s for asynchronous (non-blocking) usage with the v3 interface. These are designed to be replacements for the write() and read() system calls which have been used to submit and complete SCSI commands in interface versions 1, 2 and 3 of the sg driver.

reinstate the original functionality of the SG_FLAG_NO_DXFER request flag. If given then it will bypass user data copies between the user space and kernel buffers. This flag will not affect the user data transfers (usually DMA) between SCSI devices and kernel buffers controlled by this driver; they still take place. This level of control is needed for the "sharing" request feature discussed below.

're-purpose' previously unused 8 bytes at end of struct sg_scsi_id for the full SCSI LUN as an array of 8 bytes. Use anonymous union within the structure to avoid breaking existing code.

copy output obtained by 'cat /proc/scsi/sg/debug' to 'cat /sys/kernel/debug/scsi_generic/snapshot'. This is the initial debugfs support. Add snapshot_devs read-write attribute that can be used to limit which sg devices are shown in the snapshot.

add support for io_poll, also known as hipri and blk_poll with new SGV4_FLAG_HIPRI flag. Currently both sync and async usage is supported but restrictions may need to be added for async usage. This feature is "new ground" for the SCSI subsystem.

collect IO statistics in the same fashion as the st (SCSI tape) driver. The sysstat package already has a utility called tapestat that can be cloned for the sg driver. The cloned utility might be called sgstat.

bump the driver version number to 4.0.12

Probably the most important aspect of the first patchset not shown in the above list, is modernizing the driver code. Much of that driver code is over twenty years old and many things have changed in the kernel since then, especially in the area of multi-core machines and the code needed to handle the parallelism that introduces.

The following list of features are added in the second patchset:

add file descriptor sharing in which the user sets up a relationship between two sg driver file descriptors. This is used for two purposes: for request sharing (next bullet) and for allowing multiple requests submitted on a single file descriptor to be able to access another (shared) sg file descriptor.

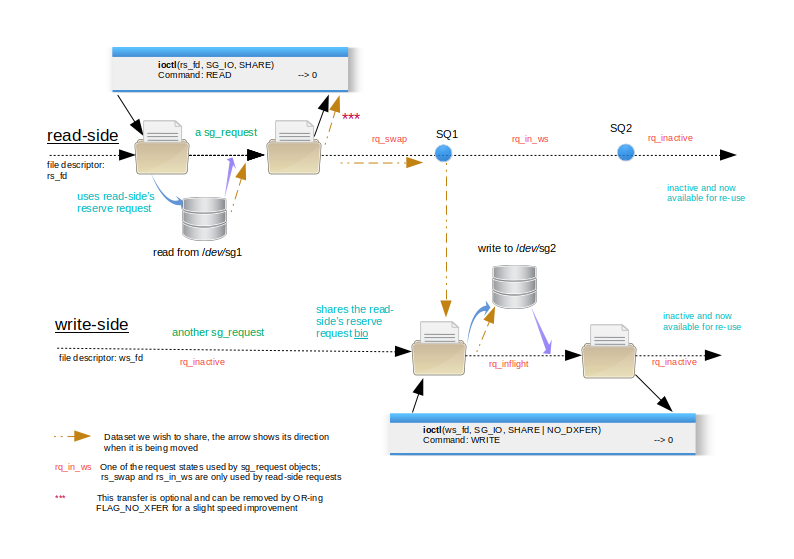

add request sharing to expedite copying. Large copies can be considered as a sequence of copy segments. Each copy segment is typically a READ from device X followed by a WRITE to device Y using the data just received from device X. Request sharing uses a single, "in-kernel" buffer shared by both the READ and the WRITE. There is an option to copy the READ data into the user space. Rather than copying, the same logic can be used for verifying one segment of data is the same as another segment of data (potentially on another disk). To do a verify, the SCSI WRITE command simply is replaced with the VERIFY command (with its BYTCHK field set to 1).

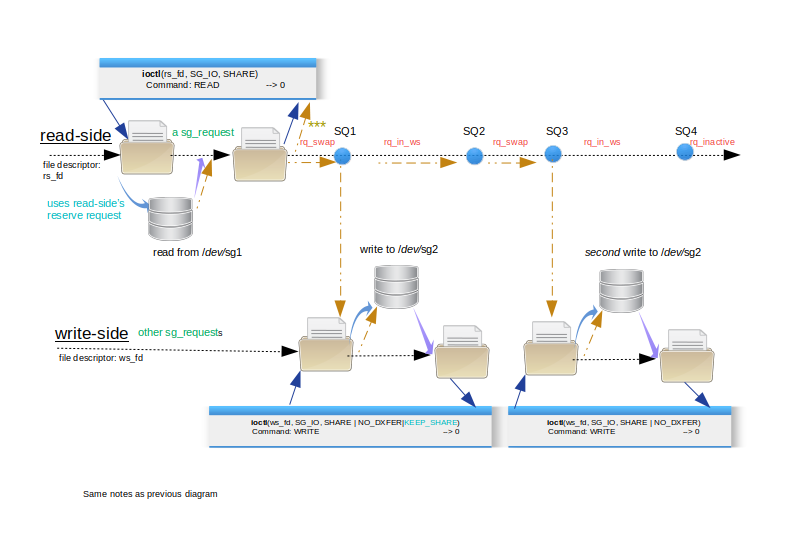

add single READ, multiple WRITE capability to the request sharing in the previous bullet. WRITEs may be to different devices (and are done sequentially and use the same in-kernel buffer). The WRITEs may be a subset of the read data and with the SGV4_FLAG_DOUT_OFFSET flag (value in sg_io_v4::spare_in), it may start at any byte offset in the read buffer. Also non data-moving SCSI commands may appear in this sequence. These commands may move data on the storage device, but not between the host computer and the storage device. Examples of potentially useful non data-moving SCSI commands are SYNCHRONIZE CACHE, PRE-FETCH and EXTENDED COPY.

add an extensible SG_SET_GET_EXTENDED ioctl(2) that takes a fixed size structure (96 byte). Some of that structure is currently not used to allow for later additions. It contains both integer (32 bit) and boolean fields. Multiple actions can be performed with a single call to ioctl( SG_SET_GET_EXTENDED).

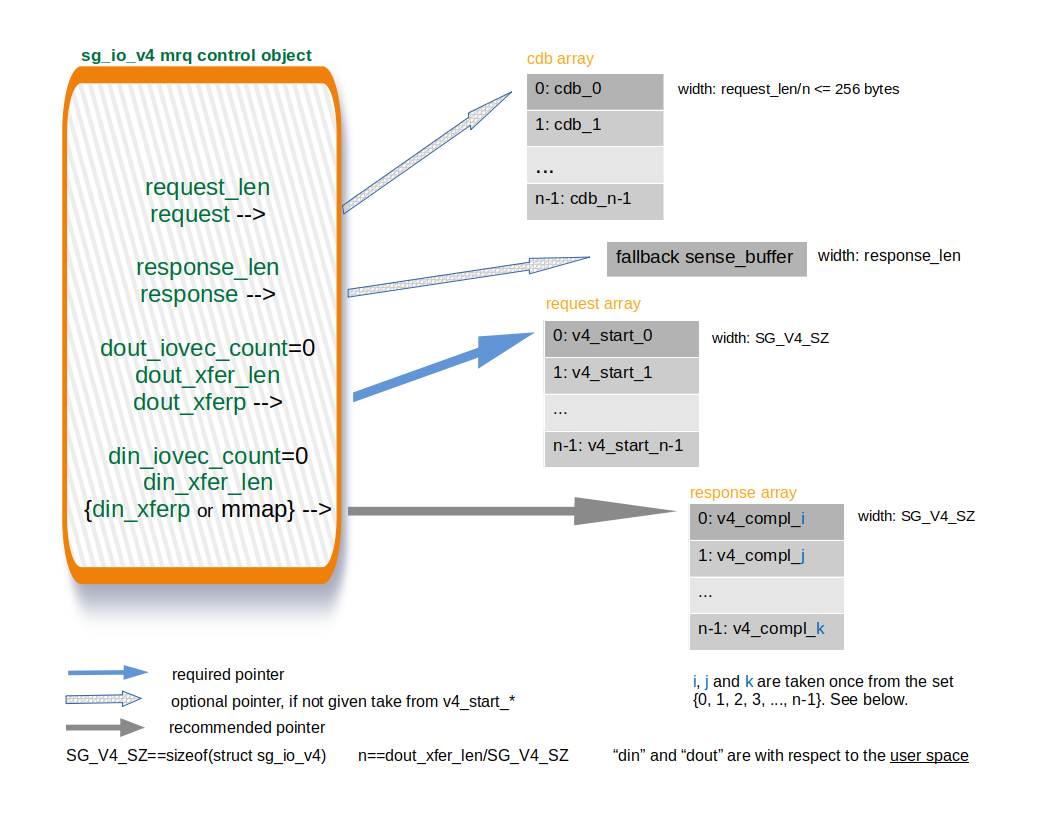

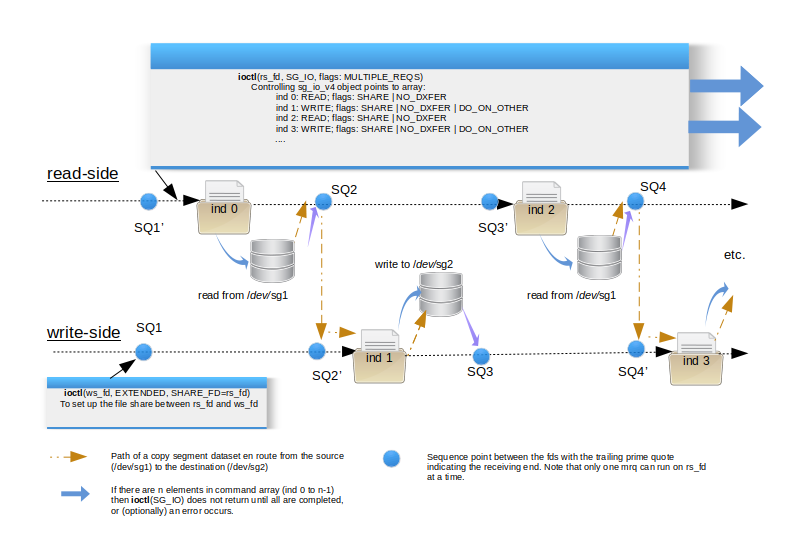

add multiple requests capability (mrq) in a single ioctl(SG_IO) or ioctl(SG_IOSUBMIT) invocation. Can be combined with request sharing. Multiple non-blocking requests can use an ioctl(SG_IOSUBMIT) call for submission while one or more ioctl(SG_IORECEIVE) calls can be used to receive the associated responses. Requests in the request array may use SGV4_FLAG_DO_ON_OTHER to take advantage of file descriptor sharing.

add a SGV4_FLAG_IMMED flag for ioctl(SG_IORECEIVE) or ioctl(SG_IORECEIVE_V3) [or read(2)] calls. This enables non-blocking mode, making it equivalent to setting O_NONBLOCK on the associated file descriptor. May also be used on the control object given for multiple requests to either ioctl(SG_IOSUBMIT) or ioctl(SG_IORECEIVE).

add bi-directional support with the sg V4 interface; async bidi uses new ioctl(2)s: SG_IOSUBMIT and SG_IORECEIVE, while sync bidi uses ioctl(SG_IO). Unfortunately bidi SCSI command support has been removed from the Linux kernel in lk 5.1 so it has been removed from this driver to allow it to merge post lk 5.1 . It is still available as a patch on this driver when in kernels prior to that support being removed.

use ioctl(SG_SET_GET_EXTENDED) to yield information that was previously "hidden" from the user space. For example return the sg device minor number (e.g. the "3" in /dev/sg3) of the sg device that owns the file descriptor the ioctl(2) is called on.

ioctl(SG_SET_GET_EXTENDED) can be used to change the segment size of scatter gather lists. In the v3 driver the segment size is fixed at 32 KB.

add ioctl(SG_IOABORT) to abort an inflight command/request using its pack-id or tag. Also allow a single call to abort all inflight and un-submitted requests associated with a multiple requests invocation.

add logic for tag handling and keep existing pack_id (packet id) logic which plays a similar role

the shared variable blocking (svb) method of the multiple requests capability (mrq) is designed for large copy (and copy-like such as verify/compare) operations. While blocking the invoking user thread, it can issue a fixed number of READ commands (currently up to 8) asynchronously and as each read completes, its dependent WRITE command(s) is issued. There is a flag (SGV4_FLAG_ORDERED_WR) for additionally making sure that those WRITEs are issued in the same order as their READs, important when the destination of a copy is a ZBC (shingled) disk

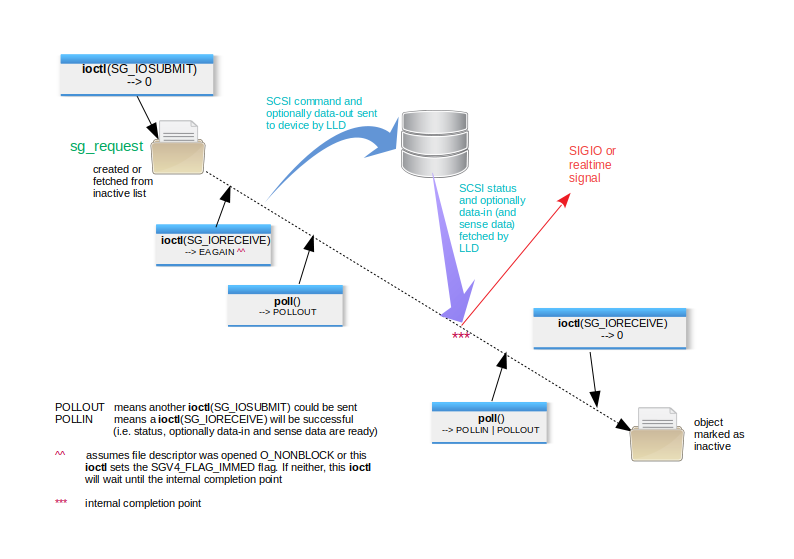

add support to pass a file descriptor generated by eventfd(2) to the driver via an ioctl(2). Another ioctl(2) can be used to remove the relationship between an eventfd and a sg file descriptor; this is optional and is needed, for example, if the user wants to associate a different eventfd to a sg file descriptor. Requests that have the SGV4_FLAG_EVENTFD in their flags increment the internal count maintained by the eventfd mechanism when that request reaches its internal completion point. See Figures 2 and 4.

while the order of responses (i.e. completions) picked up by a mrq ioctl(SG_IORECEIVE) can vary, the user can control where those responses are placed in the response array. The user can also give a maximum number of responses that a mrq ioctl(SG_IORECEIVE) can receive.

use io_poll/hipri/blk_poll with multiple requests (mrq), especially svb. With svb blk_poll(spin<--false) calls can be interleaved between multiple requests on the read-side and their pairs on the write-side. Apart from setting the flags on the control object, no extra work is required on the user space side.

bump the driver version number to 4.0.47

There are still some things to do:

allow some task management functions to be sent (when v4 interface's subprotocol field is 1)

extend debugfs support.

Again these points are given in bullet form and overlap somewhat:

warn (once per kernel run in the log) that the sg version 1 and version 2 interfaces are deprecated. Apart from version 3 and version 4 being better options, the version 1 and version 2 interfaces rely solely on the write(2) and read(2) system calls sending a mix of user data and metadata which is frowned upon by security folks

deprecate the use of the write(2) and read(2) system calls in the version 3 interface. Again with a warning message once per kernel run in the log. Using this driver upgrade supply ioctl(SG_IOSUBMIT_V3) and ioctl(SG_IORECEIVE_V3) as "drop in" replacements.

deprecate the use of 'echo 1 > /proc/scsi/sg/allow_dio' as a prelude to doing direct IO with this driver. Indirect IO remains the default and the driver continues to require the SG_FLAG_DIRECT_IO flag on each request that the user wants to use direct IO.
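To illustrate the per-request nature of direct IO mentioned in the last point, here is a minimal C sketch using the v3 interface. It sets SG_FLAG_DIRECT_IO on a request before submission and, after completion, inspects the info field to see whether direct IO was actually used. The helper names sg_request_direct_io() and sg_used_direct_io() are hypothetical; the flag and mask constants come from <scsi/sg.h>. The sketch assumes the driver may fall back to indirect IO when it cannot honour the request, which is why the completion check is shown.

```c
#include <assert.h>
#include <stddef.h>
#include <scsi/sg.h>

/* Ask for direct IO on this one request; other requests are unaffected. */
void sg_request_direct_io(struct sg_io_hdr *hdr)
{
    assert(hdr != NULL);
    hdr->flags |= SG_FLAG_DIRECT_IO;
}

/* After completion: returns 1 if the info field reports direct IO, else 0. */
int sg_used_direct_io(const struct sg_io_hdr *hdr)
{
    assert(hdr != NULL);
    return (hdr->info & SG_INFO_DIRECT_IO_MASK) == SG_INFO_DIRECT_IO;
}
```

Checking the info field after each completion is cheap, and it tells a performance-sensitive application whether the zero-copy path was really taken.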

More to follow ....

SCSI and other storage related command sets send a lot of data to storage devices and receive as much if not more data back from those devices. That data can be subdivided into metadata and user data. The SCSI metadata sent to the storage device is the command, which is sometimes referred to as the cdb (command descriptor block). The metadata received back from the device is a SCSI status byte and optionally a sense buffer of 18 or more bytes. More generic terms for those transfers are the request and the associated response. User data sent to the storage device is termed data-out and user data received from the device is called data-in. These two terms are sometimes shortened to "dout" and "din". A few SCSI commands have both data-out and data-in transfers and are referred to as bi-directional (or bidi), while the majority of SCSI commands send user data either out or in, or transfer no user data. The SCSI command sets define where user data will be placed in, or fetched from, the storage device but leave the details of where (and how) that user data is placed in the initiator (i.e. at the computer or local end) to the transport. Examples of transports are: iSCSI, SAS, SATA, FCP, SRP (Infiniband) and USB (UASP).
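As an aside on the response metadata, the sketch below pulls the sense key, additional sense code (ASC) and its qualifier (ASCQ) out of a sense buffer. It follows the two sense data layouts defined by the T10 SPC standards: fixed format (response codes 0x70 and 0x71) and descriptor format (0x72 and 0x73). The type sense_summary and the function decode_sense() are hypothetical names used only for this illustration; nothing here is taken from the sg driver itself.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical summary of the three values most callers look at first. */
struct sense_summary {
    uint8_t sense_key;
    uint8_t asc;
    uint8_t ascq;
};

/* Returns 0 on success, -1 if the buffer is too short or not sense data. */
int decode_sense(const uint8_t *sb, size_t len, struct sense_summary *out)
{
    uint8_t resp_code;

    assert(out != NULL);
    if (sb == NULL || len < 1)
        return -1;
    resp_code = sb[0] & 0x7f;
    if (resp_code == 0x70 || resp_code == 0x71) {
        /* Fixed format: key in byte 2, ASC/ASCQ in bytes 12 and 13. */
        if (len < 14)
            return -1;
        out->sense_key = sb[2] & 0x0f;
        out->asc = sb[12];
        out->ascq = sb[13];
        return 0;
    }
    if (resp_code == 0x72 || resp_code == 0x73) {
        /* Descriptor format: key in byte 1, ASC/ASCQ in bytes 2 and 3. */
        if (len < 4)
            return -1;
        out->sense_key = sb[1] & 0x0f;
        out->asc = sb[2];
        out->ascq = sb[3];
        return 0;
    }
    return -1;
}
```

In practice the len argument would be the number of sense bytes the pass-through reports as written, not the size of the buffer that was offered.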

Another aspect of a SCSI pass-through is whether to map the sending of a request and receiving of the associated response onto a single system call or divide it into two parts. The single system call approach is termed here as blocking or synchronous. The two part approach is termed as non-blocking or asynchronous. Both approaches typically have an associated timeout. It is assumed that any user data transfer associated with the command will have taken place before a successful response is sent by the storage device. As a general rule the blocking approach is simpler to program while the non-blocking approach is more flexible, allowing code to do other chores while waiting for SCSI commands to complete.

Traditionally character device drivers in Unix have had an open(2), close(2), read(2), write(2), ioctl(2) interface to the user space. As well as those system calls this driver supports mmap(2), poll(2) and fasync(). The fasync() driver call is related to the fcntl(2) system call in which the file descriptor flags may be changed to add O_ASYNC (e.g. fcntl(fd, F_SETFL, flags | O_ASYNC) ). When considering how to send SCSI commands and associated data to a pass-through driver such as sg, it soon becomes evident that a structure will be needed to hold all the components. This is the same approach used by other operating systems that offer a SCSI pass-through interface. And in the almost 30 years that Linux has been in existence, it has had three (and a half) such structures.

The sg driver was present in Linux kernel 1.0.0 released in 1992. It supported just two ioctl(2)s at the time: SG_SET_TIMEOUT and SG_GET_TIMEOUT, plus some "pass-through" ioctl(2)s that started with "SCSI_IOCTL_" that were in common with other ULDs (e.g. sd and st drivers) and implemented by the Linux SCSI mid-level. The only method of sending a SCSI command by this driver was with the async write(2) and read(2) system calls (not counting the synchronous pass-through ioctl(2) SCSI_IOCTL_SEND_COMMAND, implemented by the SCSI mid-level).

The version 1 SCSI pass-through interface only supported the asynchronous approach. This is its interface structure found in Linux kernel 1.0.0 (1992):

    struct sg_header
    {
        int pack_len;  /* length of incoming packet <4096 (including header) */
        int reply_len; /* maximum length <4096 of expected reply */
        int pack_id;   /* id number of packet */
        int result;    /* 0==ok, otherwise refer to errno codes */
        /* command follows then data for command */
    };

Only the pack_id field is found in all versions of the sg driver interface and its semantics remain the same. However there is an issue with the pack_id and the read(2) system call: pack_id is out-going data (to the driver in this case) while the rest of the data in that structure (with possibly the data-in from the storage device tacked onto the end) is in-coming data. This bidirectional data flow is abnormal for a read(2) system call which normally only expects in-coming data. It is of note that in the version 4 driver the new ioctl(2) to replace read(2) is ioctl(SG_IORECEIVE) and it is defined with the __IOWR() macro indicating both a write (from the user space) and a read (into the user space) data transfer.

The version 2 SCSI pass-through interface structure is really just a small extension of version 1:

    struct sg_header {
        int pack_len;   /* [o] reply_len (ie useless), ignored as input */
        int reply_len;  /* [i] max length of expected reply (inc. sg_header) */
        int pack_id;    /* [io] id number of packet (use ints >= 0) */
        int result;     /* [o] 0==ok, else (+ve) Unix errno (best ignored) */
        unsigned int twelve_byte:1;
                        /* [i] Force 12 byte command length for group 6 & 7 commands */
        unsigned int target_status:5;   /* [o] scsi status from target */
        unsigned int host_status:8;     /* [o] host status (see "DID" codes) */
        unsigned int driver_status:8;   /* [o] driver status+suggestion */
        unsigned int other_flags:10;    /* unused */
        unsigned char sense_buffer[SG_MAX_SENSE];
    };

There are various shortcomings of the version 2 (and hence version 1) interface structure: the command (cdb), data-in, and/or data-out were tacked onto the end of the interface structure. The command length was not given explicitly but derived from the cdb, making it difficult to support vendor specific commands.
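The following C sketch shows one way a command length can be derived from the first cdb byte, which is the kind of guesswork the v1/v2 interface depends on. It assumes the conventional mapping from the opcode's group code (its top three bits) to a length, and it models the twelve_byte override for group 6 and 7 (vendor specific) opcodes described in the structure above. The function name sg_v2_cdb_len() and the table are illustrative assumptions rather than a copy of the driver's code.

```c
#include <assert.h>
#include <stdint.h>

/*
 * Guess the cdb length from the opcode's group code (bits 7..5).
 * Groups 6 and 7 are vendor specific, so the 10 byte default for them
 * is only a guess; twelve_byte lets the caller force 12 bytes instead.
 */
int sg_v2_cdb_len(uint8_t opcode, int twelve_byte)
{
    /* Assumed table: one length per group code 0..7. */
    static const uint8_t size_by_group[8] = { 6, 10, 10, 12, 16, 12, 10, 10 };
    int len;

    assert(twelve_byte == 0 || twelve_byte == 1);
    len = size_by_group[(opcode >> 5) & 0x7];
    if (opcode >= 0xc0 && twelve_byte)
        len = 12;
    return len;
}
```

A vendor specific command in group 6 or 7 with, say, a 16 byte cdb cannot be expressed this way at all, which is the limitation that the explicit cmd_len field in the version 3 interface removes.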

Since many of the field and constant names are the same or related between the version 3 interface, the version 4 interface and the control object use of the version 4 interface, three colours are used to distinguish what is being referred to:

blue: associated with the version 3 interface

violet: associated with the version 4 interface and, in the case of a multiple requests (mrq) invocation, associated with each element in the request (and response ) array

dark green: associated with the control object of a multiple requests (mrq) invocation

In some cases a field name is in two colours (two tone) to indicate it applies to two of the above. Since very little reference is made to the version 2 and version 1 interface fields, those names are in the default text colour. The term flag (and as a verb) is used often in a generic sense in which case it is not coloured; the same applies to several other field names (e.g. timeout and duration).

The version 3 SCSI pass-through interface structure was introduced around 2000 and was a departure from versions 1 and 2:

    typedef struct sg_io_hdr {
        int interface_id;           /* [i] 'S' for SCSI generic (required) */
        int dxfer_direction;        /* [i] data transfer direction */
        unsigned char cmd_len;      /* [i] SCSI command length */
        unsigned char mx_sb_len;    /* [i] max length to write to sbp */
        unsigned short iovec_count; /* [i] 0 implies no sgat list */
        unsigned int dxfer_len;     /* [i] byte count of data transfer */
        /* dxferp points to data transfer memory or scatter gather list */
        void __user *dxferp;        /* [i], [device -> *i or *i -> device] */
        unsigned char __user *cmdp; /* [i], [*i] points to command to perform */
        void __user *sbp;           /* [i], [*o] points to sense_buffer memory */
        unsigned int timeout;       /* [i] MAX_UINT->no timeout (unit: millisec) */
        unsigned int flags;         /* [i] 0 -> default, see SG_FLAG... */
        int pack_id;                /* [i->o] unused internally (normally) */
        void __user *usr_ptr;       /* [i->o] unused internally */
        unsigned char status;       /* [o] scsi status */
        unsigned char masked_status;/* [o] shifted, masked scsi status */
        unsigned char msg_status;   /* [o] messaging level data (optional) */
        unsigned char sb_len_wr;    /* [o] byte count actually written to sbp */
        unsigned short host_status; /* [o] errors from host adapter */
        unsigned short driver_status;/* [o] errors from software driver */
        int resid;                  /* [o] dxfer_len - actual_transferred */
        /* unit may be nanoseconds after SG_SET_GET_EXTENDED ioctl use */
        unsigned int duration;      /* [o] time taken by cmd (unit: millisec) */
        unsigned int info;          /* [o] auxiliary information */
    } sg_io_hdr_t;

Unused fields should be set to zero on input. It is recommended that the whole sg v3 structure is zeroed (e.g. with memset()) prior to a command request being built and submitted. Note that one of the constants: SG_DXFER_NONE, SG_DXFER_TO_DEV or SG_DXFER_FROM_DEV should be placed in the dxfer_direction field and they all have negative values (-1, -2 and -3 respectively). This is used to differentiate between the v1/v2 interface (which has reply_len in that position) and this (v3) interface.
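A minimal C sketch of that advice follows: it zeroes a v3 structure, fills in the fields needed for a standard SCSI INQUIRY command and issues it with the blocking ioctl(SG_IO). The helper names sg_v3_prep_inquiry() and sg_v3_inquiry(), the buffer sizes and the 20 second timeout are assumptions chosen for illustration; the structure, constants and ioctl come from <scsi/sg.h>.

```c
#include <assert.h>
#include <fcntl.h>
#include <string.h>
#include <sys/ioctl.h>
#include <unistd.h>
#include <scsi/sg.h>

#define INQ_CMD_LEN   6
#define INQ_REPLY_LEN 96   /* illustrative allocation length */
#define SENSE_LEN     32   /* illustrative sense buffer size */

/* Zero the header, then set only the fields an INQUIRY needs. */
void sg_v3_prep_inquiry(struct sg_io_hdr *hdr, unsigned char *cdb,
                        unsigned char *reply, unsigned char *sense)
{
    assert(hdr && cdb && reply && sense);
    memset(hdr, 0, sizeof(*hdr));
    memset(cdb, 0, INQ_CMD_LEN);
    cdb[0] = 0x12;                      /* INQUIRY opcode */
    cdb[4] = INQ_REPLY_LEN;             /* allocation length */
    hdr->interface_id = 'S';
    hdr->dxfer_direction = SG_DXFER_FROM_DEV;   /* negative: marks v3 */
    hdr->cmd_len = INQ_CMD_LEN;
    hdr->cmdp = cdb;
    hdr->dxfer_len = INQ_REPLY_LEN;
    hdr->dxferp = reply;
    hdr->mx_sb_len = SENSE_LEN;
    hdr->sbp = sense;
    hdr->timeout = 20000;               /* milliseconds */
}

/* reply must hold INQ_REPLY_LEN bytes. Returns 0 on success, else -1. */
int sg_v3_inquiry(const char *dev_path, unsigned char *reply)
{
    unsigned char cdb[INQ_CMD_LEN];
    unsigned char sense[SENSE_LEN];
    struct sg_io_hdr hdr;
    int res;
    int fd;

    fd = open(dev_path, O_RDWR);
    if (fd < 0)
        return -1;
    sg_v3_prep_inquiry(&hdr, cdb, reply, sense);
    res = ioctl(fd, SG_IO, &hdr);       /* blocks until the command completes */
    close(fd);
    if (res < 0)
        return -1;
    /* SG_INFO_OK means no SCSI, host or driver level problem was flagged. */
    return ((hdr.info & SG_INFO_OK_MASK) == SG_INFO_OK) ? 0 : -1;
}
```

The preparation step is kept separate from the ioctl(2) call so that the same header could also be handed to an asynchronous submission path.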

The version 3 sg driver supported the version 1, 2 and 3 interface structures. It introduced the blocking ioctl(SG_IO) while keeping the write(2)/read(2) technique for asynchronous usage. The blocking ioctl(SG_IO) has also been implemented in the block layer for SCSI block devices (e.g. /dev/sdb) and in other drivers such as the SCSI tape driver (st). So at this time the version 3 interface structure together with ioctl(SG_IO) is the most used SCSI pass-through in Linux. Over time there has been a transfer of functionality from the write(2) and read(2) system calls to various ioctl(2)s. Using the write(2) and read(2) system calls in the way that this driver does is frowned upon by the Linux kernel architects. Even though adding new ioctl(2)s is also discouraged, two new ioctl(2)s were proposed in this post by Linux architect (L. Torvalds). Those two ioctl(2)s plus two closely related ioctl(2)s have been implemented in this upgrade.
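For comparison with the blocking ioctl(SG_IO), the write(2)/read(2) technique for asynchronous v3 usage can be sketched as below. The caller is assumed to have already filled in a struct sg_io_hdr (interface_id 'S', cdb pointer, buffers and so on) and to have opened an sg device node. The function names sg_v3_submit() and sg_v3_receive() are hypothetical, and using poll(2) to wait for the completion is one choice among several.

```c
#include <assert.h>
#include <poll.h>
#include <unistd.h>
#include <scsi/sg.h>

/* Queue one prepared v3 request; returns 0 if the driver accepted it. */
int sg_v3_submit(int sg_fd, const struct sg_io_hdr *hdr)
{
    ssize_t n;

    assert(hdr != NULL);
    n = write(sg_fd, hdr, sizeof(*hdr));
    return (n == (ssize_t)sizeof(*hdr)) ? 0 : -1;
}

/*
 * Wait up to timeout_ms for a completion, then fetch it into *hdr.
 * Returns 0 when a response was read, -1 on timeout or error.
 */
int sg_v3_receive(int sg_fd, struct sg_io_hdr *hdr, int timeout_ms)
{
    struct pollfd pfd = { .fd = sg_fd, .events = POLLIN };
    ssize_t n;
    int ready;

    assert(hdr != NULL);
    ready = poll(&pfd, 1, timeout_ms);
    if (ready <= 0 || !(pfd.revents & POLLIN))
        return -1;
    n = read(sg_fd, hdr, sizeof(*hdr));
    return (n == (ssize_t)sizeof(*hdr)) ? 0 : -1;
}
```

Between the two calls the application is free to do other work or to submit further requests, matching responses to requests afterwards via pack_id or usr_ptr.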

Some weaknesses of the version 3 interface were that it had no provision for bidirectional commands and that it included pointers. Pointers in interface structures are problematic because they change size when moving from 32 bit to 64 bit architectures (and that was a big issue at the time). Also the version 3 interface was too SCSI command set specific and could not easily pass related protocols such as SCSI task management functions (TMFs) or the SAS Management Protocol (SMP). So around 2005 the version 4 SCSI pass-through interface structure was introduced:

    struct sg_io_v4 {
        __s32 guard;            /* [i] 'Q' to differentiate from v3 */
        __u32 protocol;         /* [i] 0 -> SCSI , .... */
        __u32 subprotocol;      /* [i] 0 -> SCSI command, 1 -> SCSI task
                                   management function, .... */
        __u32 request_len;      /* [i] in bytes */
        __u64 request;          /* [i], [*i] {SCSI: cdb} */
        __u64 request_tag;      /* [i] See Table 1 entry */
        __u32 request_attr;     /* [i] {SCSI: task attribute} */
        __u32 request_priority; /* [i] {SCSI: task priority} */
        __u32 request_extra;    /* [i->o] See Table 1 entry */
        __u32 max_response_len; /* [i] in bytes */
        __u64 response;         /* [i], [*o] {SCSI: (auto)sense data} */
        /* "dout_": data out (to device); "din_": data in (from device) */
        __u32 dout_iovec_count; /* [i] 0 -> "flat" dout transfer else
                                   dout_xfer points to array of iovec */
        __u32 dout_xfer_len;    /* [i] bytes to be transferred to device */
        __u32 din_iovec_count;  /* [i] 0 -> "flat" din transfer */
        __u32 din_xfer_len;     /* [i] bytes to be transferred from device */
        __u64 dout_xferp;       /* [i], [*i -> device] */
        __u64 din_xferp;        /* [i], [device -> *i] */
        __u32 timeout;          /* [i] units: millisecond */
        __u32 flags;            /* [i] bit mask. See Table 1 entry and Table 2 */
        __u64 usr_ptr;          /* [i->o] unused internally */
        __u32 spare_in;         /* [i] See Table 1 entry */
        __u32 driver_status;    /* [o] 0 means ok */
        __u32 transport_status; /* [o] 0 means ok */
        __u32 device_status;    /* [o] {SCSI: command completion status} */
        __u32 retry_delay;      /* [o] {SCSI: status auxiliary information} */
        __u32 info;             /* [o] See Table 1 entry and Table 3 */
        __u32 duration;         /* [o] time to complete, in milliseconds (or nanoseconds) */
        __u32 response_len;     /* [o] bytes of response actually written */
        __s32 din_resid;        /* [o] din_xfer_len - actual_din_xfer_len */
        __s32 dout_resid;       /* [o] dout_xfer_len - actual_dout_xfer_len */
        __u64 generated_tag;    /* [o] see Table 1 entry */
        __u32 spare_out;        /* [o] zero placed in this field */
        __u32 padding;
    };

Again, unused fields should be set to zero on input. The __s32 and __u64 types could be replaced by the (more) standard int32_t and uint64_t C types. The pointers are still there but are placed in fixed length (64 bit) unsigned integers. All the other integer sizes are fixed so that the structure is the same size on 32 and 64 bit architectures. Between around 2005 and this upgrade, the version 4 interface structure was only used by the bsg driver, which explains why its interface structure is found in the <linux/bsg.h> header file. The version 2 and version 3 interface structures are found in the <scsi/sg.h> header file.
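The pointer handling described above can be sketched in C as follows. The example prepares a v4 structure for a SCSI INQUIRY, storing each user space pointer in its 64 bit field via a cast through uintptr_t. The function name sg_v4_prep_inquiry() and the 20 second timeout are assumptions made for illustration; the structure and the BSG_PROTOCOL_SCSI and BSG_SUB_PROTOCOL_SCSI_CMD constants come from <linux/bsg.h>.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>
#include <linux/bsg.h>

#define INQ_CMD_LEN 6

/* Zero the v4 header, then describe a data-in (INQUIRY) request. */
void sg_v4_prep_inquiry(struct sg_io_v4 *h, unsigned char *cdb,
                        void *reply, uint32_t reply_len,
                        void *sense, uint32_t sense_len)
{
    assert(h && cdb && reply && sense);
    assert(reply_len <= 255);           /* must fit in one cdb byte here */
    memset(h, 0, sizeof(*h));
    memset(cdb, 0, INQ_CMD_LEN);
    cdb[0] = 0x12;                      /* INQUIRY opcode */
    cdb[4] = (unsigned char)reply_len;  /* allocation length */
    h->guard = 'Q';
    h->protocol = BSG_PROTOCOL_SCSI;
    h->subprotocol = BSG_SUB_PROTOCOL_SCSI_CMD;
    h->request_len = INQ_CMD_LEN;
    /* Pointers travel as 64 bit integers, whatever the native width. */
    h->request = (uint64_t)(uintptr_t)cdb;
    h->max_response_len = sense_len;
    h->response = (uint64_t)(uintptr_t)sense;
    h->din_xfer_len = reply_len;
    h->din_xferp = (uint64_t)(uintptr_t)reply;
    h->timeout = 20000;                 /* milliseconds */
}
```

The prepared structure would then be passed as the third argument of ioctl(SG_IO), either on a bsg device node or, with the driver changes described on this page, on an sg device node.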

The following table has a row for each field in the version 4 interface structure. In the second column is the corresponding field name from the version 3 interface structure. A field name in brackets implies a close, but not exact, match; see the notes in the third column. No entry in the second column implies there is no matching field in the version 3 interface structure.

v4 interface field |

corresponding to in v3 interface |

Notes |

|---|---|---|

guard [io] |

[interface_id] |

Both fields are the first in their respective structures and are assumed to be 32 bits each. The guard for v4 is an ASCII 'Q' stored as an unsigned 32 bit integer. The interface_id is an ASCII 'S' stored as a 32 bit integer. The difference between signed and unsigned is not important in this case. |

protocol [io] |

|

A value of '0' (a 32 bit integer) is used for all SCSI protocols |

subprotocol [io] |

|

A value of '0' for SCSI commands sets based on SPC. The value '1' is reserved for SCSI Task Management Functions [TMFs] which are not implemented at this time. |

request_len [i] |

cmd_len |

Number of bytes in SCSI command. Since cmd_len is an unsigned char (i.e. an 8 bit byte) the largest number it can represent is 255 in the v3 interface. |

request [i, *o] |

cmdp |

Like all pointers in the v4 interface, request is a pointer value placed in a 64 bit unsigned integer. This is done to make the size of v4 interface constant (as long as pointers (by C definition able to fit in unsigned long) fit in 64 bits). Conversely, cmdp is a pointer so its size will very between 32 and 64 bit systems. |

request_tag [i] |

|

Used if ioctl(SG_SET_FORCE_PACK_ID) third argument points to non-zero integer and SG_CTL_FLAGM_TAG_FOR_PACK_ID is set via the extended ioctl(2) on this file descriptor. This value is acted upon by ioctl(SG_IORECEIVE) and ioctl(SG_IOABORT). The generated_tag is only written when ioctl(SG_IOSUBMIT) completes. So the user space code needs to copy the contents of generated_tag to this field to match by that tag value in a call to ioctl(SG_IORECEIVE). |

request_attr [i] |

|

not currently used |

request_priority [i] |

|

when the SGV4_FLAG_REC_ORDER flag is set then the value in this field on submission is held with the request internally. If after completion this request is read using ioctl(SG_IORECEIVE) with the SGV4_FLAG_MULTIPLE_REQS flag set then the value is used as an index into the response array to place its response. |

request_extra [io] |

pack_id |

A packet identifier of -1 is taken as a wildcard (i.e. match any). Twos complement is assumed for the 32 bit unsigned request_extra so -1 becomes 0xffffFFFF . Also used by ioctl(SG_IOABORT) for identification. In the v3 interface the submitted pack_id is placed in the async completion object. In the v4 interface the submitted request_extra is placed in the async completion object. Both of these fields only change the behaviour of a request if ioctl(SG_SET_FORCE_PACK_ID) is active, otherwise they are just carried through to the completion object which is useful (along with usr_ptr) in async usage. |

max_response_len [i] |

mx_sb_len |

No more than this number of sense bytes will be written out starting at where response points. |

response [i, {*i},o] |

sbp |

A pointer to the sense buffer. Only used when the SCSI device yields sense data for the associated command. In the non-blocking case, the pointer value given to ioctl(SG_IOSUBMIT) is used and any value given to ioctl(SG_IORECEIVE) is ignored and when that ioctl(2) returns this field will contain the original value in it. The note given for request applies here also. |

dout_iovec_count [i] |

[iovec_count] |

If this field is zero then dout_xferp (or dxferp) points to user data to be written from the host to the storage device. If this field is non-zero, then it's the number of elements in the scatter gather list pointed to by dout_xferp (or dxferp). Kernels around lk 5.10 limit this value to UIO_MAXIOV which is 1024. |

dout_xfer_len [io] |

[dxfer_len] |

This field is the number of bytes pointed to by dout_xferp (or dxferp). The data is (or will be) moved from the host to the SCSI device (e.g. a SCSI WRITE command) |

din_iovec_count [i] |

[iovec_count] |

If this field is zero then din_xferp (or dxferp) points to a user buffer that will receive the data read from the storage device into the host. If this field is non-zero, then it's the number of elements in the scatter gather list pointed to by din_xferp (or dxferp). Kernels around lk 5.10 limit this value to UIO_MAXIOV which is 1024. |

din_xfer_len [io] |

[dxfer_len] |

This field is the number of bytes pointed to by din_xferp (or dxferp). The data is (or will be) moved from the SCSI device to the host (e.g. a SCSI READ command). |

dout_xferp [i, *o] |

[dxferp] |

If the dout_iovec_count field is zero then this field points to the first byte to be transferred from the user space memory to the storage device. All the other bytes (indicated by dout_xfer_len) should follow the first byte with no gaps. If the dout_iovec_count field is non-zero then this field points to a scatter gather list which the driver will use to output data from the user space to the storage device. The note given for request applies here also. |

din_xferp [i, *i] |

[dxferp] |

If the din_iovec_count field is zero then this field points to the first byte to be transferred from the storage device to the user space memory. All the other bytes (indicated by din_xfer_len) should follow the first byte with no gaps. If the din_iovec_count field is non-zero then this field points to a scatter gather list which the driver will use to read data from the storage device to the user space. The note given for request applies here also. |

timeout [i] |

timeout |

This is the number of milliseconds the SCSI mid level will wait for a command to finish before it attempts to abort that command. If zero is given, a driver default of SG_DEFAULT_TIMEOUT (60,000 or 60 seconds) is chosen. Several SCSI commands (e.g. FORMAT UNIT with the IMMED bit cleared on a 10 Terabyte disk (hard disk or SSD)) take a lot longer than that. User manuals for disks often indicate how long such commands will take. |

flags [io] |

flags |

This is a 32 bit integer in which the lower numbered bit positions are boolean flags. The available settings are listed in the <include/uapi/scsi/sg.h> header file. They start with SG_FLAG_ or SGV4_FLAG_ . See Table 2 below. The two tone flags field indicates either the v3 flags field or the v4 flags field. |

usr_ptr [io] |

usr_ptr |

The driver does not use this value. Whatever pointer value that is placed in usr_ptr will be sent back to the user space after the command has completed. This may be useful in async (non-blocking) code when the submission and completion are separated (e.g. in different threads). Whenever multiple submissions are outstanding, the order of completion is up to the storage device. The note given for request applies here also. |

spare_in [i] |

|

when the SGV4_FLAG_DOUT_OFFSET flag is set, this field holds the data-out (dout) byte offset. It is mainly used on the write-side (typically a WRITE command) of a share request. This byte offset is applied to the in-kernel buffer from the preceding read-side command and the copy (DMA) out to the write-side device will be dout_xfer_len bytes long. If this offset plus dout_xfer_len will exceed the in-kernel buffer size then the request will fail with an errno of E2BIG. |

driver_status [o] |

driver_status |

This value is output by the driver. Zero indicates no errors. These are not so much sg driver errors as errors from the SCSI mid-level. The possible values are listed in the <include/scsi/scsi.h> header and they start with DRIVER_ . If driver_status is non-zero then SG_INFO_CHECK is set in the info field. |

transport_status [o] |

host_status |

This value is output by the driver. Zero indicates no errors. These are not so much sg driver errors as errors from a SCSI Low Level Driver (LLD) typically controlling a Host Bus Adapter (HBA). The possible values are listed in the <include/scsi/scsi.h> header and they start with DID_ . If transport_status is non-zero then SG_INFO_CHECK is set in the info field. |

device_status [o] |

status |

This value is output by the driver. Zero indicates no errors. This is the 8 bit SCSI Status returned in response to all SCSI commands (unless they time out). The possible values are listed in the <include/scsi/scsi_proto.h> header and they start with SAM_STAT_ . The SCSI status SAM_STAT_CONDITION_MET is non-zero but is not an error; any other non-zero value is an error and will cause SG_INFO_CHECK to be set in the info field. |

retry_delay [o] |

|

not currently used. Zero is output by the driver in this field. |

info [o] |

info |

This value is output by the driver. This value contains boolean flags OR-ed together. The possible flags are listed in the <include/uapi/scsi/sg.h> and they start with SG_INFO_ . See Table 3. |

duration [o] |

duration |

This value is output by the driver. It is the time between when a command is issued to the block layer until the internal completion point occurs. By default the unit is milliseconds, however if SG_CTL_FLAGM_TIME_IN_NS is set in the extended ioctl(2) on this file descriptor then the unit is nanoseconds. |

response_len [o] |

sb_len_wr |

This value is output by the driver. This is the length of the sense buffer (i.e. the response) that is returned from the storage device. This usually indicates something has gone wrong with the command. A value of 0 indicates there is no sense buffer and the storage device has most likely successfully completed the command. Due to caches in storage devices WRITEs may initially report success and later report a "deferred error". If response_len is greater than zero then SG_INFO_CHECK is set in the info field. |

din_resid [o] |

resid |

This value is output by the driver. The value is din_xfer_len less the number of bytes actually transferred in from the storage device. |

dout_resid [o] |

|

This value is output by the driver. The value is dout_xfer_len less the number of bytes actually transferred out to the storage device. |

generated_tag [o ***] |

|

This value is output by the driver. Zero will be placed in this field unless the SGV4_FLAG_YIELD_TAG flag is one of the flags set in the flags field in a call to ioctl(SG_IOSUBMIT). In this case, the block layer's tag value is placed there. |

spare_out [o] |

|

Zero is placed in this field except for the control object of multiple requests (see below). |

Table 1: sg v4 interface structure compared with v3

Unused fields should be set to zero on input. It is recommended that the whole sg_io_v4 structure is zeroed (e.g. with memset() ) prior to a command request being built and submitted. In the first column of the above table, the "i" and "o" indications within the square brackets are in some cases expansions on what is shown in the sg_io_v4 structure definition comments above the table. Those with "i" should be set (or left as zero) before a call to ioctl(SG_IO) or ioctl(SG_IOSUBMIT). Those with "o" will in some cases be set by this driver and can be checked after a call to ioctl(SG_IO) or ioctl(SG_IORECEIVE). The "*o" indicates a pointer being used as the source starting address to copy data from the user space to the driver and often on to a storage device. The "*i" indicates a pointer being used as the destination starting address to copy data from a storage device into the user space. This level of detail becomes more important when a request is split between an ioctl(SG_IOSUBMIT) and an ioctl(SG_IORECEIVE). Some input values (e.g. din_xfer_len) are copied to the output as a convenience (e.g. to help in this calculation: (din_xfer_len - din_resid) which is the number of bytes actually read). The "[o ***]" indication notes the special case of generated_tag whose value is output after ioctl(SG_IOSUBMIT); all other output values (and generated_tag itself) are output after ioctl(SG_IORECEIVE) has completed.
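The zero-then-fill pattern described above can be sketched as follows. This is a minimal illustration, assuming the struct sg_io_v4 definition found in <linux/bsg.h>; the helper names build_v4_data_in() and v4_bytes_read() are hypothetical and are not part of the driver or any library.

```c
#include <stdint.h>
#include <string.h>
#include <linux/bsg.h>   /* struct sg_io_v4, BSG_PROTOCOL_SCSI (assumed header) */

/* Hypothetical helper: build a v4 object for a data-in command such as
 * INQUIRY. Nothing is sent here; the caller hands the object to ioctl(2). */
static void build_v4_data_in(struct sg_io_v4 *h,
                             const unsigned char *cdb, unsigned int cdb_len,
                             void *din, unsigned int din_len,
                             unsigned char *sense, unsigned int sense_len,
                             int pack_id, unsigned int timeout_ms)
{
    memset(h, 0, sizeof(*h));               /* unused fields stay zero */
    h->guard = 'Q';                         /* identifies a v4 object */
    h->protocol = BSG_PROTOCOL_SCSI;
    h->subprotocol = BSG_SUB_PROTOCOL_SCSI_CMD;
    h->request_len = cdb_len;
    h->request = (uint64_t)(uintptr_t)cdb;  /* "*o": pointer held in 64 bits */
    h->din_xfer_len = din_len;
    h->din_xferp = (uint64_t)(uintptr_t)din;        /* "*i" destination */
    h->max_response_len = sense_len;
    h->response = (uint64_t)(uintptr_t)sense;
    h->request_extra = (uint32_t)pack_id;   /* -1 becomes 0xffffffff */
    h->timeout = timeout_ms;                /* 0 selects the driver default */
}

/* Bytes actually read: din_xfer_len less din_resid (both set on output) */
static long v4_bytes_read(const struct sg_io_v4 *h)
{
    return (long)h->din_xfer_len - (long)h->din_resid;
}
```

The filled object would then be passed as the third argument of ioctl(SG_IO) for a blocking request, or of ioctl(SG_IOSUBMIT) followed later by ioctl(SG_IORECEIVE) for a non-blocking one.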

The square brackets in the second column of the above table imply the v3 interface field is similar to, but not exactly the same as, the v4 interface field.

Note that multiple requests (in one invocation) use an instance of the same sg_io_v4 structure as its control object. Most fields have a different, but related, meaning when they are in a control object. A control object is distinguished by having the SGV4_FLAG_MULTIPLE_REQS flag set. Multiple requests are described in a later section.

The following table lists the flag values that can be OR-ed together and placed in the flags field. They are listed in numerical order of their values (shown in hex within square brackets). Where two names map to the same value, the preferred name is in boldface. The older flags (i.e. inherited from the version 3 driver) tend to have lower values.

Flag name [hex_value] |

Description |

|---|---|

SG_FLAG_DIRECT_IO [0x1] SGV4_FLAG_DIRECT_IO |

The default action of this driver is to "bounce" data through kernel buffers en route to or from the user space. This is sometimes referred to as "indirect" IO. This is obviously inefficient but is very flexible. Among other reasons, memory in a user space needs to be "pinned" during a direct IO data transfer because, seen from the driver's perspective, a user space process can be killed at any time (e.g. by a superuser or the OOM killer). Another issue with direct IO is that the user space buffer must meet whatever alignment requirements the storage HBA imposes. Most alignment problems can be avoided by the user allocating buffers with memalign(sysconf(_SC_PAGESIZE), num_blks * lbs) where lbs is the logical block size (in bytes) of the storage device. |

SG_FLAG_UNUSED_LUN_INHIBIT [0x2] |

Ignored. This is a remnant from SCSI-2 in which bits 7, 6 and 5 of byte 1 of many cdb_s carried the 3 bit LUN value. If SCSI 2 equipment is being used, the cdb can be altered explicitly to carry the LUN address. |

SG_FLAG_MMAP_IO [0x4] SGV4_FLAG_MMAP_IO |

This is another "direct" IO capability in which the user space buffers are obtained by mmap(2) system calls. The driver arranges for user data to be transferred directly between the user space and the storage device. Since the driver provides the buffer pointer returned by mmap(2), then it is guaranteed to meet any alignment (and page pinning) requirements. This flag cannot be given with SGV4_FLAG_DIRECT_IO as they are basically different ways of doing the same thing. Note that request sharing is a faster way of doing a disk-to-disk copy compared to using this flag for several reasons, one being that with this flag two "mmap-ed" buffers must be used (for the source and destination of the copy) and the user code must copy the data between those two buffers. See section on mmap below. |

SGV4_FLAG_YIELD_TAG [0x8] |

This flag may be used with ioctl(SG_IOSUBMIT). If that ioctl(2) does not return an error then a tag value will be placed in the generated_tag field of the object pointed to by the ioctl's third argument. If the tag value (obtained from the block subsystem) is not available then -1 (or 0xffffFFFF) is placed in the generated_tag field. |

SG_FLAG_Q_AT_TAIL [0x10] SGV4_FLAG_Q_AT_TAIL |

This will place the current request/command at the tail (end) of the block system's queue of commands for the current device. For historical reasons, the driver default is to place the current request/command at the head (start) of the block system's queue. One rationale for this is that admin commands (e.g. INQUIRY and MODE SELECT) should take precedence over normal (data moving) commands. The driver default can first be overridden on a per file descriptor basis with the extended ioctl(SG_CTL_FLAGM_Q_TAIL). Then the per device setting can be overridden on a per request/command setting with this or the following flag. |

SG_FLAG_Q_AT_HEAD [0x20] SGV4_FLAG_Q_AT_HEAD |

This will place the current request/command at the head (start) of the block system's queue of commands for the current device. See the SGV4_FLAG_Q_AT_TAIL flag for more details. |

SGV4_FLAG_DOUT_OFFSET [0x40] |

this flag is only active with the v4 interface and reads the "dout" (typically a WRITE command) byte offset from sg_io_v4::spare_in . The request with this flag is assumed to be the write-side request (e.g. a WRITE command) following a read-side request that has populated an in-kernel buffer maintained by the driver. That byte offset will become the starting address within that in-kernel buffer for the DMA out to the write-side device. |

SGV4_FLAG_EVENTFD [0x80] |

Assuming the eventfd has been set up on this file descriptor, then at the completion of the associated request that eventfd is signalled. This increments the internal count that can be accessed with read(eventfd, buff, 8). See ioctl(sg_fd, SG_SET_GET_EXTENDED( SG_SEIM_EVENTFD), ...) below, for how the user space passes an eventfd to this driver. |

SGV4_FLAG_COMPLETE_B4 [0x100] ^^ |

This flag is only permitted within an array of requests given with a multiple request invocation (mrq). It instructs the driver to wait for the completion of the current request before ("B4") submitting the next request in the array of requests. |

SGV4_FLAG_SIGNAL [0x200] ^^ |

For v3 headers, this flag is ignored. For version 4 this flag is only permitted within an array of requests given with a multiple request invocation. |

SGV4_FLAG_IMMED [0x400] |

This flag uses "IMMED" in the same fashion as various SCSI commands (e.g. FORMAT UNIT) do, where it means: check the request and if it is good, start whatever and then return as promptly as possible. The significant part here is that it doesn't wait for whatever to complete. It can be used with the version 3 and 4 interfaces, including the mrq control object. |

SGV4_FLAG_HIPRI [0x800] |

The associated request will have REQ_HIPRI set when issued to the block layer ***. The completion handling will use blk_poll() instead of waiting for an event or classic polling (i.e. via the Unix poll(2) or Linux epoll(2) system calls). For *** and further details see the section on io_poll/blkpoll below. |

SGV4_FLAG_STOP_IF [0x1000] |

This flag is only permitted in the control object with some methods of multiple request invocation. Basically if an internal completion point reports an error then further submissions will not occur. All submissions prior to the one with the detected error will be processed as normal and that may require action by the user space code. In the response array, all requests that have been processed at the internal completion point have SG_INFO_MRQ_FINI OR-ed into their info field. |

SGV4_FLAG_DEV_SCOPE [0x2000] |

This flag is currently only used by ioctl(SG_IOABORT). Without this flag that ioctl(2) will only look at the given file descriptor for a match on pack_id or tag. In practice a request may need to be aborted when a call like ioctl(SG_IO) takes an unreasonable amount of time to finish, suggesting that something is wrong. Often the file descriptor associated with the ioctl(SG_IO) is locked up in a process that is not responding. When this flag is given, the ioctl(SG_IOABORT)'s own file descriptor is checked first and, if no match is found, all other sg file descriptors belonging to the same sg device (hence "device scope") are checked and the first request found matching the given pack_id or tag is aborted. It has the same action with a mrq abort. Use with care. |

SGV4_FLAG_SHARE [0x4000] |

This flag indicates request sharing. Such requests usually occur in pairs. It can only be used with a file descriptor which is either the read-side, or the write-side of a file share which has already been set up. If it is the read-side then the command must be a READ or READ-like SCSI command (i.e. gets data-in from the storage device). If it is the write-side then the command must be a WRITE or WRITE-like SCSI command (i.e. sends data-out to the storage device). |

SGV4_FLAG_DO_ON_OTHER [0x8000] ^^ |

This flag is only permitted within an array of requests given with a mrq invocation. |

SG_FLAG_NO_DXFER [0x10000] SGV4_FLAG_NO_DXFER |

With indirect IO, data is "bounced" through a kernel buffer as it passes from user space memory to the storage device (or vice versa). This flag instructs the driver not to do the portion of the copy between the kernel buffers and user memory. There are several cases where this is useful. It is used on the write side of request sharing because the data to be written is already sitting in that kernel buffer (placed there by the preceding READ). Another case is when data is mirrored on two disks, it only needs to be actually read back from one of the disks, but it may be a good idea to read it back from the other disk at the same time to see if a MEDIUM ERROR is reported (which would indicate the mirror is no longer safe). If the data is not going to be compared, then the second READ could use this flag. |

SGV4_FLAG_KEEP_SHARE [0x20000] |

The default action of this driver is to "free up" a reserve request buffer after the write-side request (typically a WRITE command) in a read-write request share. When this flag is applied to a write-side request, then it can be followed by another write-side request which will use the same in-kernel buffer from the preceding read-side request. The last write-side request (e.g. in a READ-WRITE-WRITE-WRITE sequence) should not have this flag set. The last request in a multiple request array must not have this flag set (or an -ERANGE error will result). |

SGV4_FLAG_MULTIPLE_REQS [0x40000] |

This flag can only be used on a sg v4 interface object and indicates that this object is a control object for multiple requests (mrq). |

SGV4_FLAG_ORDERED_WR [0x80000] |

Only used by shared variable blocking method. Requires that write-side commands are issued in the same order as the read-side commands they are paired with. Read-side commands are issued in the order they appear in the command array which is supplied by the user. |

SGV4_FLAG_REC_ORDER [0x100000] |

This flag can be used either on a control object or a normal sg v4 object (hence the two colours). Allows the order in the response array to be specified. There is a later subsection on the use of this flag. |

SGV4_FLAG_META_OUT_IF [0x200000] |

Only used with multiple requests (mrq) control object. When given the response array is only output to the user space if there is something abnormal to report. |

Table 2: The flags field in the v3 and v4 interface structures
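The SG_FLAG_DIRECT_IO entry above recommends page aligned user buffers. The sketch below shows one way to obtain such a buffer; it uses the POSIX posix_memalign() call in place of the older memalign() named in the table, and the helper name alloc_dio_buffer() is hypothetical.

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200112L   /* for posix_memalign() under strict C modes */
#endif
#include <stdint.h>
#include <stdlib.h>
#include <string.h>
#include <unistd.h>

/* Hypothetical helper: allocate a zeroed, page aligned buffer large enough
 * for num_blks logical blocks of lbs bytes each. Returns NULL on failure;
 * the caller releases the buffer with free(). */
static void *alloc_dio_buffer(size_t num_blks, size_t lbs)
{
    long page_sz = sysconf(_SC_PAGESIZE);   /* the page size in bytes */
    void *p = NULL;
    size_t len;

    if (page_sz <= 0 || num_blks == 0 || lbs == 0)
        return NULL;
    if (num_blks > SIZE_MAX / lbs)          /* product would overflow */
        return NULL;
    len = num_blks * lbs;
    if (posix_memalign(&p, (size_t)page_sz, len) != 0)
        return NULL;
    memset(p, 0, len);
    return p;
}
```

Note that _SC_PAGESIZE is only the name passed to sysconf(); the alignment argument must be the value that sysconf() returns.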

The constants marked with "^^" can only be used within the request array given to a multiple requests (mrq) invocation. Further, those constants ending with "_ON_OTHER" are only valid if a file share has already been established with the file descriptor in the first argument of the ioctl(2). The flags field is always an input to the driver; the corresponding output field used by the driver to communicate with the user space when an ioctl(2) is finished is the info field.
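Two of the rules stated in Table 2 can be checked in user space before a request is submitted: the direct IO and mmap-ed IO flags cannot be combined, and the "^^" flags are only permitted inside a mrq request array. The following is a sketch only. The flag values are copied from Table 2 (these SGV4_ names are assumed not to be present in an installed header), and check_v4_flags() with its return codes is a hypothetical helper, not a driver function.

```c
#include <stdint.h>

/* Values copied from Table 2 of this document */
#ifndef SGV4_FLAG_DIRECT_IO
#define SGV4_FLAG_DIRECT_IO    0x1
#define SGV4_FLAG_MMAP_IO      0x4
#define SGV4_FLAG_Q_AT_TAIL    0x10
#define SGV4_FLAG_COMPLETE_B4  0x100    /* ^^ */
#define SGV4_FLAG_SIGNAL       0x200    /* ^^ */
#define SGV4_FLAG_DO_ON_OTHER  0x8000   /* ^^ */
#endif

/* Flags marked "^^": only valid within a mrq request array */
#define MRQ_ARRAY_ONLY_FLAGS \
    (SGV4_FLAG_COMPLETE_B4 | SGV4_FLAG_SIGNAL | SGV4_FLAG_DO_ON_OTHER)

/* Hypothetical pre-submission check for a v4 request object.
 * Returns 0 if no rule is broken, -1 if direct and mmap-ed IO are both
 * requested, -2 if a "^^" flag appears outside a mrq request array. */
static int check_v4_flags(uint32_t flags, int in_mrq_array)
{
    if ((flags & SGV4_FLAG_DIRECT_IO) && (flags & SGV4_FLAG_MMAP_IO))
        return -1;
    if (!in_mrq_array && (flags & MRQ_ARRAY_ONLY_FLAGS))
        return -2;
    return 0;
}
```

The driver performs its own validation; a check like this merely reports a mistake earlier and closer to the code that made it.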

The driver writes the info field to the user space as part of the sg version 3 and 4 interface objects. This occurs after ioctl(SG_IO), ioctl(SG_IORECEIVE) and ioctl(SG_IORECEIVE_V3). The info field conveys additional information back to the user space that is not necessarily associated with error conditions. Like the flags field, the info field contains flag values that may be OR-ed together. They are listed in the table below in numerical order.

info field [hex value] |

Notes |

|---|---|

SG_INFO_CHECK [0x1] |

an error has probably occurred, check the other error fields and the sense buffer (if its returned length > 0) |

SG_INFO_DIRECT_IO [0x2] |

Even when the SGV4_FLAG_DIRECT_IO flag is given, if certain conditions are not met then the simpler indirect IO may be performed instead. When this info field is received it's a case of: you asked for it, you got it. If this flag is not present then indirect (or mmap-ed) IO took place. |

SG_INFO_DEVICE_DETACHING [0x8] |

This should not happen very often but when it does, it means what it says. |

SG_INFO_ABORTED [0x10] |

This request/command has been aborted. If it was a data-in type command then no data is returned to the user space. Seen from the driver's perspective it is indeterminate whether the device executed the command or not. If the aborted command changed the state of the SCSI device (e.g. with MODE SELECT) then the user should issue further commands to check what happened on the device. |

SG_INFO_MRQ_FINI [0x20] |

this command, which is assumed to be one of an array of sg_io_v4 objects given in a multiple requests invocation (mrq), has completed processing. This is important to know when there are errors or mrq abort is called. |

Table 3: The info field in the v3 and v4 interface structures

The last three info values are new in this driver update.
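The info values in Table 3, together with the rules in Table 1 for when SG_INFO_CHECK is set, lend themselves to a small decoder. This is a sketch under stated assumptions: the flag values are copied from Table 3, SAM_STAT_CONDITION_MET is taken to be 0x04 as in the SCSI status codes, and the helpers info_to_str() and info_check_expected() are hypothetical names.

```c
#include <stdio.h>
#include <string.h>

/* Values copied from Table 3 of this document */
#ifndef SG_INFO_ABORTED
#define SG_INFO_DEVICE_DETACHING 0x8
#define SG_INFO_ABORTED          0x10
#define SG_INFO_MRQ_FINI         0x20
#endif
#ifndef SG_INFO_CHECK
#define SG_INFO_CHECK            0x1
#define SG_INFO_DIRECT_IO        0x2
#endif
#ifndef SAM_STAT_CONDITION_MET
#define SAM_STAT_CONDITION_MET   0x04   /* non-zero but not an error */
#endif

static const struct { unsigned int bit; const char *name; } info_names[] = {
    { SG_INFO_CHECK, "CHECK" },
    { SG_INFO_DIRECT_IO, "DIRECT_IO" },
    { SG_INFO_DEVICE_DETACHING, "DEVICE_DETACHING" },
    { SG_INFO_ABORTED, "ABORTED" },
    { SG_INFO_MRQ_FINI, "MRQ_FINI" },
};

/* Hypothetical helper: write the names of the set flags into buf,
 * separated by '|'. An info value of 0 yields an empty string. */
static char *info_to_str(unsigned int info, char *buf, size_t buflen)
{
    size_t i, used = 0;
    int n;

    if (buflen == 0)
        return buf;
    buf[0] = '\0';
    for (i = 0; i < sizeof(info_names) / sizeof(info_names[0]); ++i) {
        if (!(info & info_names[i].bit))
            continue;
        n = snprintf(buf + used, buflen - used, "%s%s",
                     used ? "|" : "", info_names[i].name);
        if (n < 0 || (size_t)n >= buflen - used)
            break;                      /* output was truncated */
        used += (size_t)n;
    }
    return buf;
}

/* Restates the Table 1 rules: 1 if SG_INFO_CHECK should be expected */
static int info_check_expected(unsigned int driver_status,
                               unsigned int transport_status,
                               unsigned int device_status,
                               unsigned int response_len)
{
    if (driver_status || transport_status || response_len > 0)
        return 1;
    return device_status != 0 && device_status != SAM_STAT_CONDITION_MET;
}
```

A typical use would be logging info_to_str(h.info, buf, sizeof(buf)) after a completed request whenever the SG_INFO_CHECK bit is set.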

The Linux SCSI subsystem is made up of three parts: several upper level drivers (ULDs), one mid level, and multiple low level drivers (LLDs). The upper level drivers are divided up by the type of SCSI device: for disks the ULD is sd, for tape (drives) the ULD is st, for DVD/CDROMs the ULD is sr, for enclosures the ULD is ses. In most contexts the sg driver is considered a ULD, however in one context: when a LLD or user sets the no_uld_attach flag (see include/scsi/scsi_device.h), then that device is attached (i.e. receives an sg device node of the form /dev/sg<n> (where <n> is an integer starting at 0)) to the sg driver but to no other ULD. The SCSI devices attached to the sg driver may be thought of as the union of the devices from all the other ULDs plus any devices that don't have a type specific ULD supported such as a PROCESSOR DEVICE type used for managing enclosures using the SAFTE protocol. The mid-level maintains interfaces for both ULDs and LLDs and provides services such as device discovery, device teardown (e.g. at shutdown or suspend) and error processing. LLDs typically manage SCSI hardware (often called Host Bus Adapters (HBAs)) or bridge to another protocol stack (e.g. USB attached SCSI (UAS, also known as UASP in USB standards)).

One difficulty faced when using this driver is knowing the mapping between the type-specific ULD device name (e.g. /dev/sdc) and the corresponding sg driver device name (e.g. /dev/sg2). The information to solve this can be found in sysfs (under the /sys/class directory) but can be a little tedious to follow. The lsscsi utility shows this information in tabular form but does not show sg devices by default. To see the type specific ULD device name and the corresponding sg device name on the same lines use 'lsscsi -g'. The author often uses 'lsscsi -gs' which additionally shows the device size (if it has a storage size). For mapping between the primary ULD device name and the sg device name with the least clutter 'lsscsi -gb' may help. Note that there is no ioctl() provided by this driver to show that mapping; it has been proposed but rejected as an encapsulation violation. In the following example /dev/sg3 and /dev/sg4 are actually enclosures but the ULD module to support them (i.e. ses) has not been built into the kernel; the last entry is a NVMe namespace which does not have a corresponding sg device name hence the trailing "-".

    # lsscsi -gb
    [4:0:0:0]    /dev/sda      /dev/sg0
    [6:0:0:0]    /dev/sdb      /dev/sg1
    [6:0:1:0]    /dev/sdc      /dev/sg2
    [6:0:2:0]    -             /dev/sg3
    [6:0:3:0]    -             /dev/sg4
    [7:0:0:0]    /dev/sdd      /dev/sg5
    [7:0:0:1]    /dev/sde      /dev/sg6
    [N:0:1:1]    /dev/nvme0n1  -
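The same mapping can be followed by hand through sysfs. The sketch below assumes the layout <sysfs_root>/class/scsi_generic/<sg_name>/device/block/<block_name>, which is what the author of this example has seen on typical systems; treat the path as an assumption rather than a documented interface. The function name sg_to_block_name() is hypothetical.

```c
#include <dirent.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: find the block device name (e.g. "sdc") behind an
 * sg node name (e.g. "sg2"). sysfs_root is normally "/sys". Returns 0 and
 * fills out on success, or -1 if the sg node is absent or has no block
 * device (e.g. an enclosure). Assumed layout:
 *   <sysfs_root>/class/scsi_generic/<sg_name>/device/block/<block_name> */
static int sg_to_block_name(const char *sysfs_root, const char *sg_name,
                            char *out, size_t out_len)
{
    char path[512];
    struct dirent *de;
    DIR *dp;
    int n, found = -1;

    n = snprintf(path, sizeof(path), "%s/class/scsi_generic/%s/device/block",
                 sysfs_root, sg_name);
    if (n < 0 || (size_t)n >= sizeof(path))
        return -1;                      /* path would not fit */
    dp = opendir(path);
    if (dp == NULL)
        return -1;
    while ((de = readdir(dp)) != NULL) {
        if (de->d_name[0] == '.')
            continue;                   /* skip "." and ".." */
        if (strlen(de->d_name) < out_len) {
            strcpy(out, de->d_name);    /* first entry is the block name */
            found = 0;
        }
        break;
    }
    closedir(dp);
    return found;
}
```

With the listing above, a call such as sg_to_block_name("/sys", "sg2", name, sizeof(name)) would be expected to place "sdc" in name, while "sg3" (an enclosure with no block device) would return -1.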

One can verify the equivalence between a primary ULD device and a sg device by issuing a SCSI INQUIRY command for the Device Identification VPD page [0x83] to both and comparing the results. All SCSI devices (and those that translate the SCSI command set) support the ioctl(SG_IO) version 3 interface. For example ioctl(open("/dev/sdd", O_RDWR), SG_IO, &a_sg_io_hdr) and ioctl(open("/dev/sg5", O_RDWR), SG_IO, &b_sg_io_hdr) can be issued with appropriate a_sg_io_hdr and b_sg_io_hdr objects; then the standard library memcmp() function can be used to compare the data-in buffers returned by those ioctl()s.
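A sketch of that comparison using the v3 interface from <scsi/sg.h> follows. The function names, buffer sizes and the 20 second timeout are choices made for this illustration only; error handling is reduced to returning -1.

```c
#include <fcntl.h>
#include <string.h>
#include <sys/ioctl.h>
#include <unistd.h>
#include <scsi/sg.h>    /* sg_io_hdr_t, SG_IO, SG_DXFER_FROM_DEV */

#define VPD_DEVICE_ID 0x83

/* Fill a 6 byte INQUIRY cdb that asks for a VPD page */
static void build_inquiry_vpd_cdb(unsigned char cdb[6], unsigned char page,
                                  unsigned int alloc_len)
{
    cdb[0] = 0x12;                      /* INQUIRY opcode */
    cdb[1] = 0x01;                      /* EVPD bit set */
    cdb[2] = page;
    cdb[3] = (alloc_len >> 8) & 0xff;   /* allocation length, big endian */
    cdb[4] = alloc_len & 0xff;
    cdb[5] = 0;                         /* control byte */
}

/* Returns the number of valid bytes placed in buf, or -1 on any failure */
static int fetch_vpd83(const char *dev_name, unsigned char *buf, int buf_len)
{
    unsigned char cdb[6], sense[32];
    sg_io_hdr_t h;
    int fd, res, len, page_len;

    fd = open(dev_name, O_RDWR);
    if (fd < 0)
        return -1;
    build_inquiry_vpd_cdb(cdb, VPD_DEVICE_ID, (unsigned int)buf_len);
    memset(&h, 0, sizeof(h));
    memset(buf, 0, (size_t)buf_len);
    h.interface_id = 'S';               /* identifies a v3 object */
    h.dxfer_direction = SG_DXFER_FROM_DEV;
    h.cmd_len = sizeof(cdb);
    h.cmdp = cdb;
    h.dxfer_len = (unsigned int)buf_len;
    h.dxferp = buf;
    h.mx_sb_len = sizeof(sense);
    h.sbp = sense;
    h.timeout = 20000;                  /* milliseconds */
    res = ioctl(fd, SG_IO, &h);
    close(fd);
    if (res < 0 || (h.info & SG_INFO_OK_MASK) != SG_INFO_OK)
        return -1;
    len = buf_len - h.resid;
    if (len < 4 || buf[1] != VPD_DEVICE_ID)
        return -1;
    /* bytes 2 and 3 hold the page length, excluding the 4 byte header */
    page_len = ((buf[2] << 8) | buf[3]) + 4;
    return page_len < len ? page_len : len;
}

/* 1 if both nodes return identical pages, 0 if not, -1 on failure */
static int same_scsi_device(const char *a, const char *b)
{
    unsigned char ba[252], bb[252];
    int la = fetch_vpd83(a, ba, sizeof(ba));
    int lb = fetch_vpd83(b, bb, sizeof(bb));

    if (la < 0 || lb < 0)
        return -1;
    return la == lb && memcmp(ba, bb, (size_t)la) == 0;
}
```

For the listing shown earlier, same_scsi_device("/dev/sdd", "/dev/sg5") would be expected to return 1; opening these nodes usually requires root or membership of the group that owns them.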

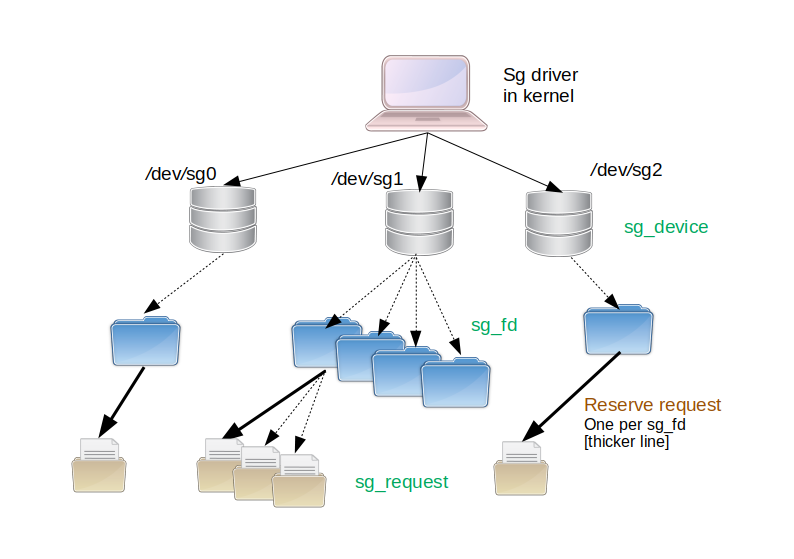

Moving onto the sg driver itself: nothing much has changed in the overall architecture of the sg driver between version 3 (v3) documented earlier, and version 4 (v4). Having a pictorial summary of the driver's object tree may help later explanations:

Figure 1: sg driver object tree

The sg driver is shown with a laptop icon at the top of the object tree. The arrow end of solid lines shows objects that are created automatically or by actions outside the user interface to the sg driver. So the disk-like icons created at the second level come from the device scanning logic undertaken by the SCSI mid-level driver in Linux. Note that there are SCSI devices other than disks such as tape units and SCSI enclosures. Also note that not all storage devices in Linux use the SCSI subsystem; examples of these are NVMe SSDs and SD cards that are not attached via USB. The type of SCSI device objects is sg_device (and in the driver code they appear as objects of C type 'struct sg_device'). Even though the sg driver's implementation is closely associated with the block subsystem, the sg driver's device nodes are character devices in Linux (e.g. /dev/sg1). The nodes are also known as character special devices.

At the third level are file descriptors which the user creates via the open(2) system call (e.g. 'sg_fd = open("/dev/sg1", O_RDWR);') . Various other system calls such as close(2), write(2), read(2), ioctl(2) and mmap(2) can use that file descriptor made by open(2). The file descriptor will stay in existence until the process containing the code that opened it exits or the user closes it (e.g. 'close(sg_fd);'). A dotted line is shown from the "owning" device to each file descriptor in order to indicate that it was created by direct user action via the sg interface. The type of file descriptor objects is sg_fd. BTW most system calls have "man pages" and the form open(2) indicates that there is a manpage in section 2 describing the open system call. Common manpage sections are "1" for commands and utilities (e.g. 'man 1 cp' explaining the copy command); "2" for system calls; "3" for functions found in system libraries (e.g. 'man 3 snprintf') and "8" for system administration commands.

At the lowest level are the sg_request objects each of which may carry a user provided SCSI command to the target device which is its grandparent in the object tree. These requests are then sent via the block and SCSI mid-level to a Low Level Driver (LLD) and then across a transport (with iSCSI that can be a long way) to the target device (e.g. a SSD). User data that moves in the same direction as the request is termed as "data-out" and the SCSI WRITE command is an example. In nearly all cases (one exception is a command timeout) a response traverses the same route as the request, but in the reverse direction. Optionally it may be accompanied by user data which is termed as "data-in" and the SCSI READ command is an example. Notice that a heavy (thicker) line is associated with the first request of each file descriptor; it points to a reserve request (in version 3 (and earlier) sg documentation this was referred to as the reserve buffer). That reserve request is built after each file descriptor is created and before the user has a chance to send a SCSI command/request on that file descriptor. This reserve request was originally created to make sure CD writing programs didn't run out of kernel memory in the middle of a "burn". That is no longer a major concern but the reserve request (and its associated buffer) has found other uses: for mmap-ed and direct IO. So when the mmap(2) system call is used on a sg device, it is the associated file descriptor's reserve request buffer that is being mapped into the user space.

The lifetime of sg_request objects is worth noting. When a sg_request object is active (inflight is the term used in this driver) it has both an associated block request object and a SCSI mid-level object. They have similar roles and overlap somewhat. However once the response is received (i.e. the internal completion point in the next diagram) then the block request and the SCSI mid-level objects are freed up. The sg_request object lives on, along with the data carrying part of the block request called the bio as that may be carrying "data-in" that has yet to be delivered to the user space. The process being described here is indirect IO and is a two stage process. For data-in that will be first DMA-ed from the target device into kernel memory, typically under the control of the LLD; the second stage is copying from that kernel memory to the user space, under the control of this driver. Even after the user has fetched the response and any data-in, the sg_request continues to live. [However once any data-in has been fetched the block request bio is freed.] The sg_request object is then marked inactive. There is one xarray per sg file descriptor. That xarray contains references (pointers) to sg_request objects. The next time a user tries to send a SCSI command through that file descriptor, its xarray will be checked to see if any inactive sg_request object has a large enough data buffer suitable for the new request; if so that sg_request object will be (re-)used for the new request. Only when the user calls close(2) on that file descriptor will all the requests in the fd's xarray be truly freed. Note that in Unix, and thus Linux, the OS guarantees that it will call the close(2) command (called release() in the kernel and sg_release() in this driver) in this driver for every file descriptor that the user has opened, irrespective of what the user code does. This is important because processes can be shut down by signals from other processes or drivers, segmentation violations (i.e. bad code) or the kernel's OOM (out-of-memory) killer. Immediately after a successful open(<a_sg_device>) system call, the new file descriptor has a xarray with one entry marked as inactive which is a reserve request.
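The reuse policy just described can be pictured with a toy user space model. This is not driver code: a small fixed array stands in for the per file descriptor xarray, and every name below (toy_fd, toy_open(), toy_get_request(), toy_finish()) is hypothetical.

```c
#include <stddef.h>

#define TOY_MAX_RQ 16

enum rq_state { RQ_UNUSED = 0, RQ_INACTIVE, RQ_INFLIGHT };

struct toy_request {
    enum rq_state state;
    size_t buf_len;             /* size of the attached data buffer */
};

/* Stand-in for a sg file descriptor and its collection of requests */
struct toy_fd {
    struct toy_request rq[TOY_MAX_RQ];
};

/* After open(2): one entry, the reserve request, marked inactive */
static void toy_open(struct toy_fd *fp, size_t reserve_len)
{
    size_t i;

    for (i = 0; i < TOY_MAX_RQ; ++i) {
        fp->rq[i].state = RQ_UNUSED;
        fp->rq[i].buf_len = 0;
    }
    fp->rq[0].state = RQ_INACTIVE;
    fp->rq[0].buf_len = reserve_len;
}

/* New command: prefer an inactive request whose buffer is large enough,
 * otherwise create a new one. Returns the slot index or -1 if full. */
static int toy_get_request(struct toy_fd *fp, size_t need)
{
    size_t i;

    for (i = 0; i < TOY_MAX_RQ; ++i) {
        if (fp->rq[i].state == RQ_INACTIVE && fp->rq[i].buf_len >= need) {
            fp->rq[i].state = RQ_INFLIGHT;      /* (re-)used */
            return (int)i;
        }
    }
    for (i = 0; i < TOY_MAX_RQ; ++i) {
        if (fp->rq[i].state == RQ_UNUSED) {
            fp->rq[i].state = RQ_INFLIGHT;
            fp->rq[i].buf_len = need;
            return (int)i;
        }
    }
    return -1;
}

/* Response and data fetched by the user: the request becomes inactive
 * but is not freed, so a later command can pick it up again. */
static void toy_finish(struct toy_fd *fp, int idx)
{
    fp->rq[idx].state = RQ_INACTIVE;
}
```

In this model nothing is released until the whole toy_fd goes away, mirroring the statement that requests are only truly freed when close(2) is called on the file descriptor.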

The above description is setting the stage for a newly added feature called request sharing introduced in the sg v4 driver. It also uses the reserve request.

The terms command and request are used interchangeably in this driver and its documentation. Strictly speaking a command refers to a SCSI command (or more precisely a SCSI Command Descriptor Block (cdb)) as documented in the various SCSI command set standards available from T10. An example of an important one of these is the "SCSI Primary Commands - 4" (usually known as: SPC-4). Quite often when a document refers to a SCSI command it implicitly also means the response (from the storage device) to that command.

The term request comes from the opposite direction: from the Linux kernel and its block layer. One of the fundamental structures in the Linux storage subsystem is 'struct request' which in the SCSI (sub-)subsystem is specialized to struct scsi_request which in turn is further specialized to struct sg_request by this driver. Why have so many structures representing basically the same thing? Two reasons: context (i.e. scsi_request and sg_request bind more information than struct request) and lifetime (e.g. sg_request "lives" longer than the other two).

One significant difference between the SCSI command sets and some other storage command sets is that SCSI command sets say nothing about near-end storage (e.g. where and how the response from a READ command will be placed in the computer's memory). The details are left to lower layers which in the case of SCSI may be SAS, SPI, iSCSI, SRP, USB (UAS(P) or block) and SATA (via SAT). This lack of near-end data management information in the command sets is especially useful when considering disk to disk copies. With request sharing, the user can still have maximum control over the copy while leaving the management of the associated data buffers, returned from each READ and required for each paired WRITE, to this driver at the kernel level. An example of "maximum control" is the ability of the user to change the WRITE commands to WRITE AND VERIFY or WRITE ATOMIC commands. The driver can also maintain the timing relationship between each READ-WRITE pair while running many such pairs asynchronously (i.e. several pairs started at the same time). With multiple requests using the shared variable blocking method this can all seem like a single blocking synchronous call to the user. So the user is getting something approaching maximum performance with reduced complexity and reduced system overhead. The complexity of buffer management and maintaining the timing relationships is transferred to this driver. The reduction of system overhead is due to minimizing the copying of user data and the removal of complexity associated with techniques like using mmap() which needs to pin or fault pages in the computer's main memory.

This section is about user data: what starts inside blocks within a disk or SSD, and ends up in a user space application, typically via the read(2) system call or, vice versa, via the write(2) system call. The simplest and most common way to accomplish this is to utilize two buffers and two copies. The first buffer is inside the kernel and the second buffer is in the user space, allocated by an application to meet its needs. Why have two buffers? Block storage by its nature is arranged in logical blocks, typically 512 or 4096 bytes long. The kernel likes memory aligned to specific boundaries and allocated in units of pages. Pages are typically 4096 bytes long and the kernel prefers copies to be page aligned. For a read(2) the first copy is typically done with hardware assist (i.e. pushed by the device or its controller) using "Direct Memory Access" (DMA). The second copy can be thought of as like the C library's memcpy() call with the added complexity of crossing the kernel/user_space boundary. What is being described here, the author calls indirect IO, and it is the default for Unix, Linux and this sg driver.

Within the kernel, the SCSI subsystem is considered a child of the block subsystem. The sg driver can be thought of as interfacing to the user space above while interfacing to both the block subsystem and the SCSI mid-level below. The SCSI mid-level is the unifying component of the SCSI subsystem. One of the more important structures in the block subsystem has the (way too general) name 'struct request'. Objects of that structure carry SCSI commands (cdb_s) to the storage device (i.e. the request) and convey back the SCSI status, and optionally a sense buffer (i.e. the response). Not all SCSI commands carry user data (e.g. the SCSI TEST UNIT READY command), but those that do have either a data-in buffer (i.e. from storage device to the user space) or a data-out buffer. The block layer's 'struct bio' is the template for creating objects that carry the user data associated with a 'struct request' object, if that request carries user data. An important aspect of these two objects is that they can have different lifetimes. A struct request object can be freed up (more likely: re-used) as soon as a driver is informed about the response of the associated request. However, especially in the case of a read(2) like operation, the associated bio object needs to stay "alive" until the data it is carrying is conveyed (copied) back to the user space.

Now returning to those two buffers and two copies, this almost begs to be improved upon. That may lead to the following observations:

1. Couldn't the user space allocate its buffers used by read(2) and write(2) to meet storage and the kernel's alignment and size requirements?

2. Alternatively, couldn't there be a system call that returns the address of the bio object to the user space (and maps the associated bio controlled pages into the user space as needed)?

3. Umm, what if the user space doesn't really need that data at all!? For example: a read(2) or write(2) being performed might just be one side of a copy operation.

The answer to point 1 is yes it can, and that is called direct IO, indicated generally in Unix with the O_DIRECT flag on an open(2) system call, or with the SGV4_FLAG_DIRECT_IO flag in this driver (on a request-by-request basis). Relating this back to the bio discussion above, the "backing store" to each bio object is the user space allocated buffers (and drivers like sg don't need to allocate any backing store for such direct IO operations). The answer to point 2 is yes it can, and this is what the mmap(2) system call does. In the case of the sg driver, it allocates the backing store for the bio objects, then via the mmap(2) system call returns the address of that backing store to the user space. And this mmap()-ed IO can be invoked on a request-by-request basis in the sg driver with the SG_FLAG_MMAP_IO flag.
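For direct IO the application supplies a suitably aligned buffer. The following is a minimal sketch using the v3 interface object and the SG_FLAG_DIRECT_IO flag as found in <scsi/sg.h>. It assumes that page alignment and a whole number of pages are sufficient, which may not hold for every low level driver. The helper names `alloc_dio_buffer()` and `attach_dio_buffer()` are made up for illustration.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>
#include <unistd.h>
#include <scsi/sg.h>

/*
 * Allocate a page aligned buffer of at least 'len' bytes, rounded up to a
 * whole number of pages. Returns NULL on failure; release with free().
 */
static void *alloc_dio_buffer(size_t len, size_t *alloc_len)
{
    long psz = sysconf(_SC_PAGESIZE);
    size_t pg, rounded;

    if (psz <= 0 || len == 0)
        return NULL;
    pg = (size_t)psz;
    rounded = ((len + pg - 1) / pg) * pg;
    if (alloc_len)
        *alloc_len = rounded;
    return aligned_alloc(pg, rounded);
}

/* Point a v3 object at the buffer and request direct IO for this request. */
static void attach_dio_buffer(sg_io_hdr_t *hp, void *buf, unsigned int len,
                              int dxfer_dir)
{
    assert(hp != NULL && buf != NULL);
    hp->dxfer_direction = dxfer_dir;   /* e.g. SG_DXFER_FROM_DEV */
    hp->dxferp = buf;
    hp->dxfer_len = len;
    hp->flags |= SG_FLAG_DIRECT_IO;    /* a request, not a guarantee */
}
```

With the v3 interface, checking `(hp->info & SG_INFO_DIRECT_IO_MASK) == SG_INFO_DIRECT_IO` after completion shows whether direct IO was actually used for that request.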

That leaves point 3. In this case, no copies to the user space are required. Ideally the copy can be "thrown over the wall" (offloaded) to a disk array where both the source and destination reside. Ultimately something has to do the actual copy, so let's consider its situation. The computer inside the disk array probably has multiple disk controllers (HBAs) so the copy could be reduced to one DMA copy: from source to destination (disk(s)). Typically disk controllers are not set up to do that; they usually DMA between storage and main memory. Given that constraint, let's reduce the copy to two DMAs and one buffer: a DMA to and from a struct bio object controlled by this driver. To do that a single struct bio object needs to be kept alive from the start of a READ operation until the corresponding WRITE command is finished. That is not easy to do with the block layer's API, but there is a simple workaround. The sg driver provides (and manages) the backing store when struct bio objects are constructed, so it can use the same backing store for each pair of READ and WRITE commands. Also note how well this approach suits the SCSI command sets which don't bother themselves with "near-end" data management (e.g. scatter gather lists) but give a high level of control at the meta-data level (e.g. 'force unit access' and group number (categorizing the IO)). In other words the command sets concentrate more on what is to be done rather than how to do it, compared to some other storage command sets. Specifically this driver can take care of the important detail: how the data gets from that READ to its paired WRITE. This is the theory behind request sharing outlined in several following sections.

These two forms: ioctl(sg_fd, SG_IO, ptr_to_v3_obj) and ioctl(sg_fd, SG_IO, ptr_to_v4_obj) can be used for submitting SCSI commands (requests) and waiting for the response before returning to the calling thread. This action is termed synchronous or blocking in this driver. In Linux most block devices that use or can translate the SCSI command set also support the first form (i.e. the ioctl(2) that takes a pointer to a v3 interface object as its third argument). So this pseudo code will work: ioctl(open("/dev/sdc"), SG_IO, ptr_to_v3_obj) but not if the third argument is a ptr_to_v4_obj. Some storage related character devices (e.g. /dev/st2 and /dev/ses3) will also accept the first form.
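The first form can be sketched as follows, using a standard INQUIRY as the command. This is an illustration only: `prep_inquiry_v3()` and `do_sg_io_v3()` are hypothetical helper names and the 20 second timeout is an arbitrary choice. The structure fields and SG_IO come from <scsi/sg.h>.

```c
#include <assert.h>
#include <string.h>
#include <sys/ioctl.h>
#include <scsi/sg.h>

#define INQ_CMD_LEN 6
#define INQ_OPCODE 0x12

/*
 * Fill in a v3 interface object for a standard INQUIRY. The cdb, resp and
 * sense buffers must remain valid until the ioctl(SG_IO) has returned.
 */
static void prep_inquiry_v3(sg_io_hdr_t *hp, unsigned char cdb[INQ_CMD_LEN],
                            unsigned char *resp, unsigned short resp_len,
                            unsigned char *sense, unsigned char sense_len)
{
    memset(hp, 0, sizeof(*hp));
    memset(cdb, 0, INQ_CMD_LEN);
    cdb[0] = INQ_OPCODE;
    cdb[3] = (unsigned char)(resp_len >> 8);    /* allocation length, MSB */
    cdb[4] = (unsigned char)(resp_len & 0xff);  /* allocation length, LSB */

    hp->interface_id = 'S';                     /* marks a v3 object */
    hp->dxfer_direction = SG_DXFER_FROM_DEV;    /* data-in */
    hp->cmdp = cdb;
    hp->cmd_len = INQ_CMD_LEN;
    hp->dxferp = resp;
    hp->dxfer_len = resp_len;
    hp->sbp = sense;
    hp->mx_sb_len = sense_len;
    hp->timeout = 20000;                        /* milliseconds (assumed) */
}

/* Blocking submit: returns when the response is available, or -1 on error. */
static int do_sg_io_v3(int sg_fd, sg_io_hdr_t *hp)
{
    return ioctl(sg_fd, SG_IO, hp);
}
```

A caller would open a device node, call `prep_inquiry_v3()`, then `do_sg_io_v3()`; only the calling thread waits while the command is in progress.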

Only two drivers currently support the second form (i.e. whose third argument is a ptr_to_v4_obj): this driver and the bsg driver.

It is important to understand that the use of ioctl(SG_IO) is only synchronous seen from the perspective of the calling thread/task/process. It is only the calling thread that waits for completion of the request. Any other thread or process submitting requests to the same or other devices associated with the sg driver will not be impeded by that wait. This assumes that the underlying devices can queue SCSI commands which most current SCSI devices are capable of doing. As an example: a large copy between two storage devices can be broken down into multiple copy segments, with each copy segment copying a comfortable amount of data (say 1 MByte) between source and destination; then multiple threads can each take a copy segment from a pool and fulfil them by doing a READ then a WRITE SCSI command. Each READ/WRITE pair of commands seems synchronous but overall the threads are doing asynchronous READs and WRITEs with respect to one another.
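The copy segment pool in the example above might look like the following sketch. It assumes C11 atomics are available; `struct seg_pool`, `struct copy_seg`, `seg_pool_init()` and `seg_pool_take()` are hypothetical names. Thread creation and the READ and WRITE commands themselves are left out: each worker thread would call `seg_pool_take()` in a loop and issue a synchronous READ then WRITE for every segment it obtains.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

/* One unit of work: a run of logical blocks to READ and then WRITE. */
struct copy_seg {
    uint64_t lba;
    uint32_t num_blks;
};

/* Shared by all worker threads; next_lba is the only field they modify. */
struct seg_pool {
    uint64_t total_blks;
    uint32_t blks_per_seg;
    atomic_uint_fast64_t next_lba;
};

static void seg_pool_init(struct seg_pool *p, uint64_t total_blks,
                          uint32_t blks_per_seg)
{
    assert(blks_per_seg > 0);
    p->total_blks = total_blks;
    p->blks_per_seg = blks_per_seg;
    atomic_init(&p->next_lba, 0);
}

/*
 * Atomically claim the next segment. Returns false once the whole copy has
 * been handed out. The final segment may be shorter than the others.
 */
static bool seg_pool_take(struct seg_pool *p, struct copy_seg *out)
{
    uint64_t lba = atomic_fetch_add(&p->next_lba, p->blks_per_seg);
    uint64_t rem;

    if (lba >= p->total_blks)
        return false;
    rem = p->total_blks - lba;
    out->lba = lba;
    out->num_blks = (rem < p->blks_per_seg) ? (uint32_t)rem : p->blks_per_seg;
    return true;
}
```

With 512 byte logical blocks, the 1 MByte segment mentioned above corresponds to a `blks_per_seg` value of 2048.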

Apart from some special cases (one shown below), it isn't generally useful to mix synchronous and asynchronous commands/requests on the same thread. An asynchronous command/request (i.e. non-blocking) could be submitted followed by a second synchronous command which will go through to completion before it returns; then the first command's completion can be fetched. Care is taken within the driver so that an asynchronous completion, even if it is pending will not be incorrectly supplied as the result of a synchronous command.

The simplest way to issue SCSI commands to any device is with a synchronous ioctl(SG_IO). Asynchronous commands have some advantages (mainly performance) but that comes at the expense of more complexity for the user application. When a program is juggling multiple asynchronous submissions and completions it needs to track either pack_id, tag or a user pointer to correctly match completions with submissions. Since the sg driver maintains strong per file descriptor context, one way to simplify the matching problem is to have one file descriptor per submission/completion. However then multiple file descriptors need to be juggled, which is not so onerous.

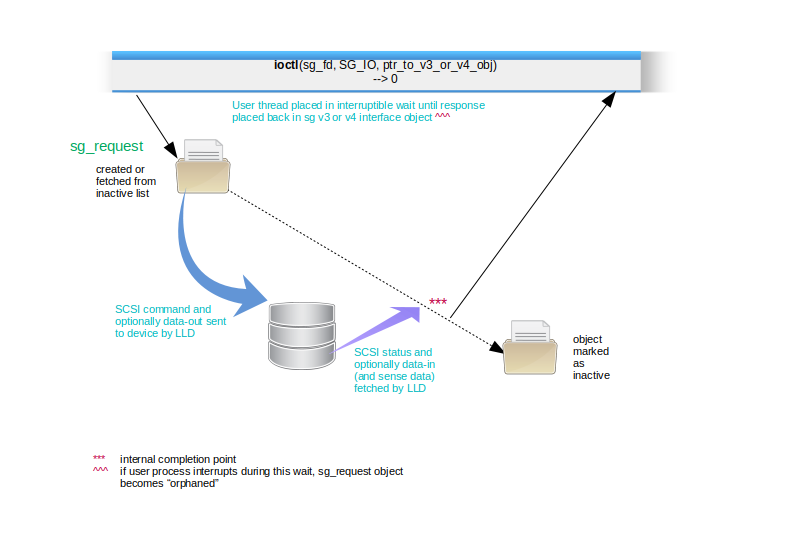

Figure 2: Synchronous (blocking) interface

In the diagram above a synchronous (i.e. blocking) ioctl(SG_IO) is shown. As a general rule the ioctl(2) will return -1 with a positive errno value if there is a problem creating the object of type sg_request in the top left of the diagram. Examples of this are syntax errors or contradictory information in the v3 or v4 interface object. Another cause could be lack of resources. Once the sg_request object is "inflight" any errors will be reported via the v3 or v4 interface object. As noted in the diagram the user thread is placed in an interruptible wait state, awaiting command/request completion. If the command takes some time the user may use a keyboard interrupt (e.g. control-C), or "kill" the containing process from another terminal (e.g. with kill(1)). This will cause the shown sg_request object to become an orphan. The default action is to remove orphan sg_request objects as soon as practical. However if the file descriptor has the "keep orphan" flag set (see ioctl(SG_SET_KEEP_ORPHAN) below) a further read(2) or ioctl(SG_IORECEIVE) will fetch the response information from the orphan which will then be marked as inactive and available for re-use.
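The two places where errors can surface suggest a two step check after a synchronous ioctl(SG_IO). The sketch below does this for a v3 interface object; the `enum sg_outcome` values and `classify_v3()` are hypothetical names, while the fields and the SG_INFO_OK_MASK and SG_INFO_OK values come from <scsi/sg.h>.

```c
#include <assert.h>
#include <string.h>
#include <scsi/sg.h>

enum sg_outcome {
    OUTCOME_OK,          /* command completed without reported problems */
    OUTCOME_SUBMIT_ERR,  /* ioctl(2) returned -1; errno holds the reason */
    OUTCOME_CMD_ERR      /* request went inflight; see the v3 object */
};

/*
 * Classify the result of ioctl(sg_fd, SG_IO, hp). 'ioctl_res' is the value
 * that ioctl(2) returned for the v3 object pointed to by 'hp'.
 */
static enum sg_outcome classify_v3(int ioctl_res, const sg_io_hdr_t *hp)
{
    assert(hp != NULL);
    if (ioctl_res < 0)
        return OUTCOME_SUBMIT_ERR;
    /* Errors after submission are reported through the interface object. */
    if ((hp->info & SG_INFO_OK_MASK) != SG_INFO_OK)
        return OUTCOME_CMD_ERR;
    if (hp->status != 0 || hp->host_status != 0 || hp->driver_status != 0)
        return OUTCOME_CMD_ERR;
    return OUTCOME_OK;
}
```

For OUTCOME_CMD_ERR a caller would typically go on to inspect the SCSI status and, if `sb_len_wr` is greater than zero, the sense buffer.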

The main context that a user space application controls in this driver is the file descriptor, shown as a sg_fd object in the earlier object tree diagram. Roughly speaking a file descriptor object is created when sg_fd=open(<sg_device_name>) succeeds and is destroyed by a close(sg_fd). Again, roughly speaking a file descriptor is confined to a user process. In multi-threaded programs it is often a good idea to have separate sg file descriptors in each thread. Some exceptions to these generalizations are discussed in the next section.

Another feature of the file descriptor object in the sg driver is that each one has a reserve request created at the same time as the file descriptor. Any new command/request on that file descriptor will use that reserve request if:

the reserve request is not already in use, and

the new command/request's data-in or data-out buffer size is non-zero, and

the file descriptor is not the read-side of a share (discussed in a later section)
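The three conditions above combine into a single predicate. The sketch below is a user-space model only; `struct rsv_model` and `uses_reserve_request()` are hypothetical names and do not correspond to fields or functions in the driver.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical per file descriptor state relevant to the reserve request. */
struct rsv_model {
    bool reserve_in_use;         /* reserve request is busy with a command */
    bool is_read_side_of_share;  /* fd is the read-side of a share */
};

/* True when a new request with this data length would use the reserve request. */
static bool uses_reserve_request(const struct rsv_model *fd, size_t dxfer_len)
{
    assert(fd != NULL);
    return !fd->reserve_in_use &&
           dxfer_len > 0 &&
           !fd->is_read_side_of_share;
}
```

Note that a command with no data transfer (e.g. TEST UNIT READY) fails the second condition and so never uses the reserve request.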

When a command request is completed, its sg_request object is marked as inactive. So no sg_request objects are actually deleted (i.e. the memory they use being freed up) until the owning file descriptor is close(2)-d. In the case where there are multiple copies of the file descriptor (e.g. a forked process or due to dup(2)) then it is the last close(2) that frees up all associated sg_request objects.

A sg_request object is long-lived and may handle multiple commands between when it is first created and its destruction when its owning file descriptor is close(2)-d. To manage this, each sg_request object has a state machine with these states:

SG_RQ_INACTIVE: doing nothing, awaiting its next assignment

SG_RQ_INFLIGHT: command/request has been sent to the block layer, expecting an indication from lower layers that the command is complete.

SG_AWAIT_RCV: that indication of completion has arrived but it is in the context of an interrupt or other event, unlikely to be the context of the issuing thread (in the user space)

SG_RQ_BUSY: transitory state, used to protect transitions between the first three states being usurped by other pesky threads and kernel mechanisms such as an interrupt.
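One way a transitory busy state can protect transitions is with an atomic compare-and-exchange, as in the user-space sketch below. This is an assumption about the general technique, not a description of how the driver implements it. The `M_*` state names, `struct sm_rq`, `rq_claim()` and `rq_settle()` are hypothetical.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Model states mirroring the four listed above (names are hypothetical). */
enum model_rq_state {
    M_INACTIVE,
    M_INFLIGHT,
    M_AWAIT_RCV,
    M_BUSY
};

struct sm_rq {
    _Atomic int state;
};

/*
 * Try to move from the stable state 'from' into the transitory busy state.
 * If several contexts race, at most one of them sees true.
 */
static bool rq_claim(struct sm_rq *rp, enum model_rq_state from)
{
    int expected = from;

    assert(from != M_BUSY);
    return atomic_compare_exchange_strong(&rp->state, &expected, M_BUSY);
}

/* Leave the busy state for a stable one; only the claimer should call this. */
static void rq_settle(struct sm_rq *rp, enum model_rq_state to)
{
    assert(to != M_BUSY);
    atomic_store(&rp->state, to);
}
```

In this model a submission would claim from M_INACTIVE and settle on M_INFLIGHT, a completion would claim from M_INFLIGHT and settle on M_AWAIT_RCV, and fetching the response would claim from M_AWAIT_RCV and settle on M_INACTIVE.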